## Convergence Plot: Normalized Mean Square Error (NMSE) vs. Iterations

### Overview

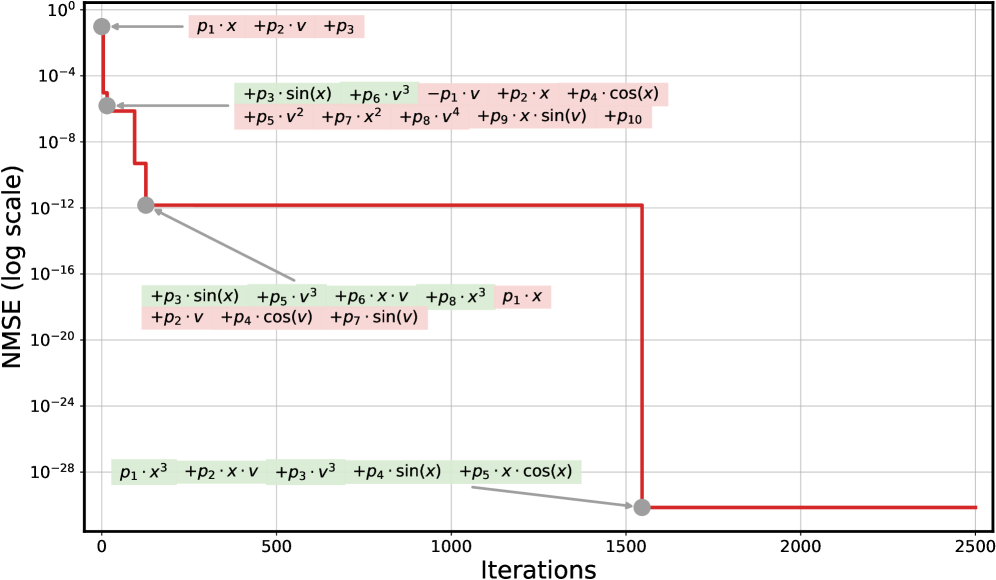

The image is a technical plot showing how a model's error (NMSE) converges over iterations. The error falls in discrete steps, each tied to a change in the model's set of mathematical terms. The error axis is logarithmic so that improvements spanning many orders of magnitude remain visible.
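The plot does not state which normalization it uses. As a minimal sketch, assuming the common convention of dividing the mean squared error by the variance of the target, NMSE could be computed as follows. The function name `nmse` is illustrative only. Under this convention, a model that predicts nothing but the mean scores exactly 1 (`10^0`), and a perfect fit scores 0.

```python
def nmse(y_true, y_pred):
    """Normalized mean square error: MSE divided by the variance of y_true.

    Assumption: variance normalization. Other conventions divide by the
    mean of y_true**2 instead; the plot does not say which one it uses.
    """
    n = len(y_true)
    if n == 0 or n != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    mean_y = sum(y_true) / n
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n
    var = sum((t - mean_y) ** 2 for t in y_true) / n
    if var == 0:
        raise ValueError("y_true has zero variance; NMSE is undefined")
    return mse / var


y = [1.0, 2.0, 3.0, 4.0]
print(nmse(y, y))                     # perfect fit
print(nmse(y, [2.5, 2.5, 2.5, 2.5]))  # mean-only baseline
```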

### Components/Axes

* **Chart Type:** Stepwise convergence plot (line chart).

* **Y-Axis:**

    * **Label:** `NMSE (log scale)`

    * **Scale:** Logarithmic, ranging from `10^0` (1) at the top to `10^-28` at the bottom.

    * **Major Ticks:** `10^0`, `10^-4`, `10^-8`, `10^-12`, `10^-16`, `10^-20`, `10^-24`, `10^-28`.

* **X-Axis:**

    * **Label:** `Iterations`

    * **Scale:** Linear, from `0` to `2500`.

    * **Major Ticks:** `0`, `500`, `1000`, `1500`, `2000`, `2500`.

* **Data Series:** A single, solid red line representing the NMSE over iterations.

* **Annotations:** Four text boxes with mathematical expressions, connected by gray arrows to specific points on the red line. The text boxes use colored backgrounds (light green and light pink) to highlight different terms.

### Detailed Analysis

The red line shows a stepwise decrease in NMSE. The error stays constant over plateaus and then drops sharply at specific iterations. Each drop coincides with a new set of model terms, as shown in the annotations.

**1. Initial State (Iteration ~0):**

* **Position:** Top-left corner of the plot.

* **NMSE Value:** Approximately `10^0` (or 1).

* **Annotation (Light Pink Background):** `p₁·x + p₂·v + p₃`

* **Trend Description:** The line starts at a high error value.

**2. First Major Drop (Iteration ~0-10):**

* **Position:** The line drops vertically from `10^0` to approximately `10^-6`.

* **NMSE Value:** Plateaus at ~`10^-6`.

* **Annotation (Mixed Green/Pink Background):**

    * Green Terms: `+p₃·sin(x)`, `+p₆·v³`, `+p₅·v²`, `+p₇·x²`, `+p₈·v⁴`, `+p₉·x·sin(v)`

    * Pink Terms: `-p₁·v`, `+p₂·x`, `+p₄·cos(x)`, `+p₁₀`

* **Trend Description:** A dramatic, near-vertical drop in error, followed by a long plateau.

**3. Second Major Drop (Iteration ~1500):**

* **Position:** The line drops vertically from ~`10^-6` to ~`10^-12`.

* **NMSE Value:** Plateaus at ~`10^-12`.

* **Annotation (Mixed Green/Pink Background):**

    * Green Terms: `+p₃·sin(x)`, `+p₅·v³`, `+p₆·x·v`, `+p₈·x³`

    * Pink Terms: `p₁·x`, `+p₂·v`, `+p₄·cos(v)`, `+p₇·sin(v)`

* **Trend Description:** Another sharp, vertical drop in error, followed by a second plateau (a short one, if the ~1500 and ~1550 iteration readings are accurate).

**4. Final Drop (Iteration ~1550):**

* **Position:** The line drops vertically from ~`10^-12` to a value below `10^-28`.

* **NMSE Value:** Plateaus below the lowest tick, `10^-28`. The exact value cannot be read from the axis; it appears to be around `10^-30` or lower.

* **Annotation (Light Green Background):** `p₁·x³ + p₂·x·v + p₃·v³ + p₄·sin(x) + p₅·x·cos(x)`

* **Trend Description:** The final and most significant drop, leading to an extremely low, stable error.
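The approximate readings listed above can be tabulated to check how long each plateau lasts and how many orders of magnitude each drop covers. This is a sketch built only from the eyeballed values in this description. It places the first drop at iteration 10 (the upper end of the ~0-10 range) and enters the final level as `10^-28`, which is an upper bound because the curve ends below the lowest tick.

```python
# Approximate readings from the description (not exact data).
# Each entry: (iteration at which the level starts, log10 of the NMSE plateau).
# The last level is only a bound: the curve leaves the axis below 10^-28.
levels = [(0, 0), (10, -6), (1500, -12), (1550, -28)]
x_max = 2500  # right edge of the iteration axis

starts = [start for start, _ in levels]
ends = starts[1:] + [x_max]

# Length of each plateau in iterations.
plateau_lengths = [end - start for start, end in zip(starts, ends)]

# Orders of magnitude covered by each drop (the last one is a lower bound).
drops = [a - b for (_, a), (_, b) in zip(levels, levels[1:])]

print(plateau_lengths)  # [10, 1490, 50, 950]
print(drops)            # [6, 6, 16]
print(sum(drops))       # 28
```

On these readings the total improvement is at least 28 orders of magnitude, and the plateau at `10^-12` is far shorter than the one at `10^-6`.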

### Key Observations

1. **Stepwise Convergence:** The model does not improve continuously. Error reduction occurs in discrete, massive jumps, each coinciding with a change in the model's functional form (a different set of terms).

2. **Magnitude of Improvement:** The total improvement in NMSE spans at least 28 orders of magnitude (from `10^0` to below `10^-28`, the lowest labeled tick), and possibly around 30 if the final plateau sits near `10^-30`.

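3. **Plateau Length:** The plateaus are not uniform. Going by the approximate iteration readings, the `10^-6` plateau lasts ~1500 iterations, the `10^-12` plateau only ~50, and the final plateau runs from ~1550 to the end of the axis at 2500. Long stretches can therefore pass with no improvement before a better model structure is found.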

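4. **Annotation Color Coding:** The green highlighting in the annotations may signify the *newly added* terms at that stage, while pink may indicate terms carried over or modified from a previous stage. This is an inferred pattern based on visual grouping, and it does not fit every box: the final annotation is entirely green even though four of its five terms already appear in the third annotation. The plot provides no legend to settle the meaning.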

### Interpretation

This plot is a powerful visualization of **model selection and symbolic regression**. It demonstrates the process of discovering an accurate mathematical model for a system.

* **What it Suggests:** The plot shows that a simple linear model (`p₁·x + p₂·v + p₃`) is a poor fit (NMSE near `10^0`). Introducing non-linear terms (`sin`, `cos`, polynomials) in stages improves the fit by many orders of magnitude. The final model, a combination of cubic, bilinear, and trigonometric terms, achieves a near-perfect fit (NMSE below `10^-28`).

* **Relationship Between Elements:** The x-axis (Iterations) represents the computational effort or search process. The y-axis (NMSE) is the objective measure of model quality. The annotations are the "discoveries" made during the search. The stepwise drops indicate moments where the algorithm found a significantly better model structure.

* **Notable Anomalies/Trends:** The most striking trend is that error falls with changes in the *structure* of the term set rather than with its size. Moving from the linear model to a set with polynomial and trigonometric terms, and then to refined sets, cuts the error by several orders of magnitude each time. Yet the final five-term model has fewer terms than the ten-term second annotation. This is consistent with symbolic regression, where finding the correct functional form matters more than incremental parameter tuning.

* **Underlying Message:** The plot argues that for this particular problem, the underlying physical or mathematical law is complex but discoverable. It highlights the efficiency of a search algorithm that can navigate a vast space of possible equations to find a parsimonious and highly accurate solution. The final model is not the most complex one shown (the second annotation has more terms), but it is the most effective, suggesting the algorithm also performs model simplification or finds a more fundamental representation.
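The point that functional form dominates can be illustrated with a small experiment. The sketch below is not the search procedure behind the plot, which the figure does not describe. It assumes synthetic data generated from the final annotation's five terms with made-up parameter values, then fits both the linear basis and the final basis by ordinary least squares and compares their NMSE (variance-normalized, also an assumption). All helper names are hypothetical.

```python
import math
import random


def solve(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_nmse(basis, samples, targets):
    """Least-squares fit of sum_i p_i * basis_i(x, v); returns the NMSE."""
    rows = [[f(x, v) for f in basis] for x, v in samples]
    k = len(basis)
    # Normal equations: (A^T A) p = A^T y
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    aty = [sum(r[i] * t for r, t in zip(rows, targets)) for i in range(k)]
    p = solve(ata, aty)
    preds = [sum(pi * ri for pi, ri in zip(p, r)) for r in rows]
    mean_t = sum(targets) / len(targets)
    sse = sum((t - q) ** 2 for t, q in zip(targets, preds))
    sst = sum((t - mean_t) ** 2 for t in targets)
    return sse / sst


# Term sets copied from the first and last annotations.
linear = [lambda x, v: x, lambda x, v: v, lambda x, v: 1.0]
final = [
    lambda x, v: x ** 3,
    lambda x, v: x * v,
    lambda x, v: v ** 3,
    lambda x, v: math.sin(x),
    lambda x, v: x * math.cos(x),
]

random.seed(0)
samples = [(random.uniform(-2, 2), random.uniform(-2, 2)) for _ in range(200)]
true_p = [0.5, -1.0, 0.3, 2.0, -0.7]  # hypothetical values, not from the plot
targets = [sum(p * f(x, v) for p, f in zip(true_p, final)) for x, v in samples]

nmse_linear = fit_nmse(linear, samples, targets)
nmse_final = fit_nmse(final, samples, targets)
print(f"linear basis: {nmse_linear:.3e}")
print(f"final basis:  {nmse_final:.3e}")
```

When the basis matches the generating structure exactly, the remaining error is limited by floating-point round-off rather than by the model, which is one plausible reading of a plateau as low as the one in the plot.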