## Neural Network Diagram: Entity and Relation Vector Association

### Overview

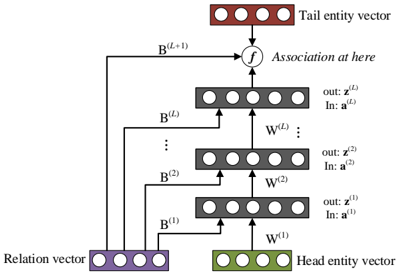

The image depicts a neural network architecture that maps a head entity vector and a relation vector to a tail entity vector. The network is a stack of L hidden layers, each of which receives the output of the layer below together with the relation vector. An association function "f" at the top combines the output of the last hidden layer with the relation vector to produce the tail entity vector.

### Components

* **Input Layers:**
  * **Relation vector:** A row of four purple circles at the bottom-left.
  * **Head entity vector:** A row of four green circles at the bottom-right.
* **Hidden Layers:**
  * A stack of L layers, indexed from 1 to L, each drawn as a row of five gray circles.
  * Each layer receives input from the layer below and from the relation vector.
* **Output Layer:**
  * **Tail entity vector:** A row of four red circles at the top.
* **Connections:**
  * **W^(l):** The weights connecting layer l-1 to layer l, drawn as upward arrows between layers. For l=1, the layer below is the head entity vector.
  * **B^(l):** The weights connecting the relation vector to layer l, drawn as arrows from the relation vector to each layer.
  * **B^(L+1):** The weights connecting the relation vector to the association function.
* **Association Function:**
  * A circle containing the letter "f" at the top, which combines the output of the last hidden layer with the relation vector.
* **Layer Outputs:**
  * Each layer l is labeled with an input a^(l) and an output z^(l).
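
One way to read the components listed above as equations is sketched below. The diagram itself gives no formulas, so several pieces are assumptions: the symbols h (head entity vector), r (relation vector), and t̂ (predicted tail entity vector), the additive combination of the two incoming signals, and the element-wise non-linearity σ.

$$
\begin{aligned}
z^{(0)} &= h, \\
a^{(l)} &= W^{(l)} z^{(l-1)} + B^{(l)} r, \qquad l = 1, \dots, L, \\
z^{(l)} &= \sigma\big(a^{(l)}\big), \\
\hat{t} &= f\big(z^{(L)},\, B^{(L+1)} r\big).
\end{aligned}
$$

Under this reading, a^(l) is the combined input to layer l and z^(l) is its output, which matches the labels in the diagram.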

### Detailed Analysis

* **Bottom Layer (l=1):**
  * Input: a^(1)
  * Output: z^(1)
  * Receives input from the head entity vector via W^(1) and from the relation vector via B^(1).
* **Intermediate Layers (l=2 to L-1):**
  * Input: a^(l), e.g. a^(2) for the second layer
  * Output: z^(l), e.g. z^(2)
  * Receives input from the previous layer via W^(l) and from the relation vector via B^(l), e.g. W^(2) and B^(2).
* **Top Layer (l=L):**
  * Input: a^(L)
  * Output: z^(L)
  * Receives input from the previous layer via W^(L) and from the relation vector via B^(L).
* **Association Layer:**
  * The output of the top layer, z^(L), and the relation vector are fed into the association function "f".
  * The relation vector is connected to the association function via B^(L+1).
  * The output of the association function is the tail entity vector.
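
The layer-by-layer flow described above can be sketched as a forward pass in plain Python. This is a minimal illustration, not the diagram's actual model: the diagram does not specify how each layer combines its two inputs, which activation it uses, or what "f" computes. The sketch assumes an additive combination, a `tanh` activation, and an element-wise sum for "f". The names `matvec`, `rand_matrix`, and `forward`, the random weights, and the layer widths are all hypothetical.

```python
import math
import random

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def rand_matrix(rows, cols, rng):
    """Small random weight matrix; stands in for learned weights."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def forward(head, rel, W, B, B_out):
    """Run the stack: W[i], B[i] belong to hidden layer i+1; B_out plays the role of B^(L+1)."""
    z = head  # treat the head entity vector as the output of "layer 0"
    for W_l, B_l in zip(W, B):
        # a^(l): previous layer through W^(l) plus relation through B^(l) (assumed additive)
        a = [u + v for u, v in zip(matvec(W_l, z), matvec(B_l, rel))]
        # z^(l): layer output (tanh is an assumed activation)
        z = [math.tanh(x) for x in a]
    # f: assumed here to be an element-wise sum of z^(L) and B^(L+1) r
    return [u + v for u, v in zip(z, matvec(B_out, rel))]

rng = random.Random(0)
entity_dim, rel_dim = 4, 4
# Hypothetical widths; the last one equals entity_dim so the assumed f returns a tail-sized vector.
widths = [5, 5, entity_dim]
in_dims = [entity_dim] + widths[:-1]
W = [rand_matrix(out_d, in_d, rng) for out_d, in_d in zip(widths, in_dims)]
B = [rand_matrix(out_d, rel_dim, rng) for out_d in widths]
B_out = rand_matrix(entity_dim, rel_dim, rng)

head = [0.1, -0.2, 0.3, 0.4]
rel = [0.5, 0.0, -0.5, 1.0]
tail_hat = forward(head, rel, W, B, B_out)
print(tail_hat)  # a 4-dimensional predicted tail entity vector
```

Because the relation vector enters through a separate matrix at every layer, changing `rel` while keeping `head` fixed changes every hidden representation, not just the first one.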

### Key Observations

* The relation vector is an input to every hidden layer and to the association function, so each hidden representation is conditioned on the relation directly rather than only through the first layer.

* The architecture is a feed-forward neural network with skip connections from the relation vector to each layer.

* The association function "f" combines the output of the final hidden layer with the relation vector to produce the tail entity vector.

### Interpretation

This diagram illustrates a neural network model designed to learn relationships between entities. The model takes a head entity vector and a relation vector as input and predicts the corresponding tail entity vector. The skip connections from the relation vector to each layer let the model incorporate relational information at multiple levels of abstraction. The association function "f" likely performs a non-linear transformation that combines the output of the last hidden layer with the relation vector, enabling the model to capture complex relationships between entities. The model could be used for tasks such as knowledge graph completion, relation extraction, and question answering.
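
As an illustration of the knowledge graph completion use case, a predicted tail entity vector could be compared against candidate entity embeddings and the candidates ranked by distance. The diagram does not describe how predictions are matched to entities, so the sketch below is an assumption. The Euclidean distance, the function name `rank_tails`, and the toy embeddings are all hypothetical.

```python
import math

def rank_tails(predicted, candidates):
    """Sort candidate entity names by Euclidean distance to the predicted tail vector."""
    def dist(vec):
        return math.sqrt(sum((p - x) ** 2 for p, x in zip(predicted, vec)))
    return sorted(candidates, key=lambda name: dist(candidates[name]))

# Toy, made-up embeddings for three candidate tail entities.
candidates = {
    "entity_a": [0.9, 0.1],
    "entity_b": [0.1, 0.9],
    "entity_c": [0.5, 0.5],
}
predicted = [0.8, 0.2]  # stands in for the network's output for some (head, relation) pair

ranking = rank_tails(predicted, candidates)
print(ranking)  # closest candidate first
```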