
## Diagram: LLM Fine-tuning and Subtask Decomposition

### Overview

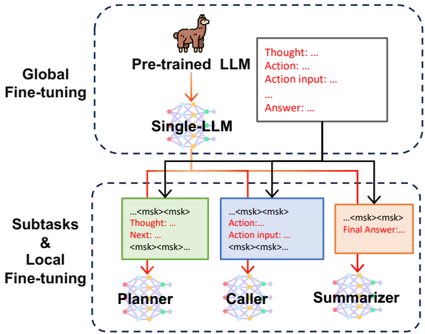

The diagram illustrates how a Large Language Model (LLM) is fine-tuned and how its task is decomposed into subtasks handled by specialized modules. It depicts two stages, Global Fine-tuning and Subtasks & Local Fine-tuning, drawn as network-like structures connected by arrows that indicate the flow of information.

### Components

The diagram is divided into two main sections, visually separated by dashed blue rectangles:

* **Global Fine-tuning:** Located at the top of the image.

* **Subtasks & Local Fine-tuning:** Located at the bottom of the image.

Key components within these sections include:

* **Pre-trained LLM:** Represented by a llama icon and the text "Pre-trained LLM".

* **Single-LLM:** A network-like structure representing the LLM after global fine-tuning.

* **Planner:** A network-like structure with a green box containing text "...<msk><msk> Thought: ... Next: ...<msk>".

* **Caller:** A network-like structure with a blue box containing text "...<msk><msk> Action: ... Action input: ...<msk>".

* **Summarizer:** A network-like structure with an orange box containing text "...<msk><msk> Final Answer: ...".

* **Thought/Action/Answer:** A white box containing the text "Thought: ..." (red), "Action: ..." (blue), "Action input: ..." (red), and "Answer: ..." (red).

Arrows indicate the flow of information between these components.

### Detailed Analysis

The diagram shows the following flow:

1. **Pre-trained LLM to Single-LLM:** A red arrow points from the llama icon (Pre-trained LLM) to the "Single-LLM" network.

2. **Single-LLM to Subtask Modules:** Three arrows originate from the "Single-LLM" network:

   * A red arrow points to the "Planner".

   * An orange arrow points to the "Caller".

   * A blue arrow points to the "Summarizer".

3. **Subtask Modules:** Each subtask module (Planner, Caller, Summarizer) is a network-like structure.

   * The "Planner" contains the text "...<msk><msk> Thought: ... Next: ...<msk>".

   * The "Caller" contains the text "...<msk><msk> Action: ... Action input: ...<msk>".

   * The "Summarizer" contains the text "...<msk><msk> Final Answer: ...".

4. **Single-LLM to Thought/Action/Answer:** A white box containing "Thought: ..." (red), "Action: ..." (blue), "Action input: ..." (red), and "Answer: ..." (red) is connected to the "Single-LLM" network.

The text "<msk><msk>" appears repeatedly within the subtask modules, likely representing masked tokens or placeholders.
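One plausible reading of the `<msk>` placeholders is that each module sees a full trajectory but keeps only the fields it is responsible for. The sketch below illustrates that reading and nothing more: the field-to-module mapping, the `mask_for_module` helper, and the sample trajectory are hypothetical and are not taken from the diagram's source.

```python
# Hypothetical mapping from output fields to the module that keeps them.
# The field names appear in the diagram; the assignment is an assumption.
FIELD_TO_MODULE = {
    "Thought": "planner",
    "Next": "planner",
    "Action": "caller",
    "Action input": "caller",
    "Final Answer": "summarizer",
}


def mask_for_module(steps, module):
    """Return the trajectory with every field not owned by `module` replaced by <msk>."""
    masked = []
    for field, text in steps:
        if FIELD_TO_MODULE[field] == module:
            masked.append(f"{field}: {text}")
        else:
            masked.append("<msk>")
    return masked


# Made-up example trajectory, for illustration only.
trajectory = [
    ("Thought", "I need the current weather."),
    ("Next", "caller"),
    ("Action", "get_weather"),
    ("Action input", '{"city": "Paris"}'),
    ("Final Answer", "It is sunny in Paris."),
]

for module in ("planner", "caller", "summarizer"):
    print(module, mask_for_module(trajectory, module))
```

In an actual training setup the masking would more likely be applied to token-level loss labels than to strings; the string version is used here only to mirror the text shown in the diagram's boxes.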

### Key Observations

* The diagram highlights a two-stage fine-tuning process.

* The "Single-LLM" sits at the center of the diagram, with one arrow leading to each specialized module.

* Each subtask module focuses on a specific aspect of the overall task (planning, action execution, summarization).

* The use of "<msk><msk>" suggests that parts of each sequence are masked, so that each module attends only to its own portion of the output.

### Interpretation

The diagram illustrates a method for improving LLM performance by combining global fine-tuning with task decomposition. The initial "Global Fine-tuning" stage prepares the LLM for a broad range of tasks. The "Subtasks & Local Fine-tuning" stage then breaks complex tasks into smaller subtasks (planning, action execution, and summarization), each handled by a specialized module. This allows more targeted fine-tuning and could improve the accuracy and efficiency of the LLM.

The llama icon likely represents a specific LLM family. The masked tokens suggest that each module works on only part of a full sequence. The arrows from the "Single-LLM" to the subtask modules may indicate that the Single-LLM coordinates the process or, given the stage labels, that each module starts from the globally fine-tuned model; the diagram alone does not settle this. Because the modules are separate, they can in principle be trained and optimized independently.

The diagram is a conceptual illustration of a system architecture and provides no quantitative data or performance metrics.
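The two stages can be sketched in a few lines of Python. This is a minimal illustration that assumes each module is initialized from the globally fine-tuned model and then tuned on its own subtask data, which the diagram suggests but does not state. The `fine_tune` and `build_modules` helpers, the dictionary "model", and the sample data are all hypothetical stand-ins; no real training takes place.

```python
import copy


def fine_tune(model, examples, tag):
    """Stand-in for a training run: copies the model and logs what it was tuned on."""
    tuned = copy.deepcopy(model)
    tuned["history"].append((tag, len(examples)))
    return tuned


def build_modules(pretrained, full_trajectories, subtask_data):
    # Stage 1: global fine-tuning on complete trajectories.
    single_llm = fine_tune(pretrained, full_trajectories, "global")
    # Stage 2: each module starts from the globally tuned model (an assumption)
    # and is tuned only on the examples for its own subtask.
    modules = {
        role: fine_tune(single_llm, examples, f"local:{role}")
        for role, examples in subtask_data.items()
    }
    return single_llm, modules


# Placeholder data, for illustration only.
pretrained = {"name": "pretrained-llm", "history": []}
full = ["trajectory-1", "trajectory-2"]
subtasks = {"planner": ["p1"], "caller": ["c1", "c2"], "summarizer": ["s1"]}

single_llm, modules = build_modules(pretrained, full, subtasks)
for role, module in modules.items():
    print(role, module["history"])
```

Copying the model before each stage keeps the pre-trained and globally tuned versions unchanged, so the three modules can diverge independently.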