## Bar Chart: Performance Comparison of R1-distilled and RRM Models

### Overview

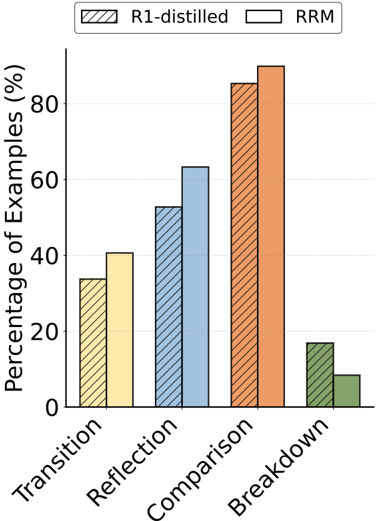

The image is a grouped bar chart comparing two models, **R1-distilled** (striped pattern) and **RRM** (solid color), across four categories: **Transition**, **Reflection**, **Comparison**, and **Breakdown**. The y-axis shows the **Percentage of Examples (%)**, ranging from 0% to 100%. Each category contains two bars, one per model. All values reported below are approximate readings from the chart, not exact figures.

### Components/Axes

- **X-axis (Categories)**:

  - Transition

  - Reflection

  - Comparison

  - Breakdown

- **Y-axis (Values)**:

  - Percentage of Examples (%) from 0% to 100% in 20% increments.

- **Legend**:

  - **R1-distilled**: Striped pattern (orange).

  - **RRM**: Solid color (blue).

- **Legend Position**: Top-right corner of the chart.

### Detailed Analysis

1. **Transition**:

   - R1-distilled: ~35% (striped orange bar).

   - RRM: ~40% (solid blue bar).

2. **Reflection**:

   - R1-distilled: ~50% (striped orange bar).

   - RRM: ~60% (solid blue bar).

3. **Comparison**:

   - R1-distilled: ~85% (striped orange bar).

   - RRM: ~90% (solid blue bar).

4. **Breakdown**:

   - R1-distilled: ~15% (striped orange bar).

   - RRM: ~10% (solid blue bar).

### Key Observations

- **RRM is higher than R1-distilled** in **Transition** (~40% vs. ~35%, +5 percentage points), **Reflection** (~60% vs. ~50%, +10 points), and **Comparison** (~90% vs. ~85%, +5 points).

- **R1-distilled** is slightly higher in **Breakdown** (~15% vs. ~10%, +5 points).

- **Comparison** has the highest values for both models (~85% for R1-distilled, ~90% for RRM).

- **Breakdown** has the lowest values for both models (~15% for R1-distilled, ~10% for RRM).
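The gaps quoted above are simple differences between the two bars in each category. As a minimal sketch, the arithmetic can be reproduced from the approximate readings in the Detailed Analysis section; the dictionary layout and the helper names `gaps` and `extreme_category` are illustrative assumptions, and the numbers are visual estimates rather than the underlying data.

```python
# Approximate values read off the chart (percentage of examples).
# These are visual estimates, not the exact data behind the figure.
values = {
    "Transition": {"R1-distilled": 35, "RRM": 40},
    "Reflection": {"R1-distilled": 50, "RRM": 60},
    "Comparison": {"R1-distilled": 85, "RRM": 90},
    "Breakdown": {"R1-distilled": 15, "RRM": 10},
}


def gaps(data):
    """Return RRM minus R1-distilled per category, in percentage points."""
    return {cat: v["RRM"] - v["R1-distilled"] for cat, v in data.items()}


def extreme_category(data, model, pick=max):
    """Return the category where `model` has its highest (or lowest) value."""
    return pick(data, key=lambda cat: data[cat][model])


if __name__ == "__main__":
    for cat, gap in gaps(values).items():
        print(f"{cat}: {gap:+d} percentage points (RRM - R1-distilled)")
    for model in ("R1-distilled", "RRM"):
        print(
            f"{model}: highest in {extreme_category(values, model)}, "
            f"lowest in {extreme_category(values, model, min)}"
        )
```

A positive gap means RRM is higher; only Breakdown comes out negative. Because the inputs are rounded to the nearest 5%, gaps of 5 points are close to the reading error and should be treated as indicative.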

### Interpretation

The data suggests that **RRM** shows a higher percentage of examples than **R1-distilled** in **Transition**, **Reflection**, and **Comparison**, while **R1-distilled** is higher only in **Breakdown**. The largest gap is in **Reflection** (~60% vs. ~50%); the gaps in **Transition**, **Comparison** (~90% vs. ~85%), and **Breakdown** are about 5 percentage points each, which is small given that the values are approximate readings. The chart itself does not explain these differences. One possible reading is that RRM leans more on reflective and comparative reasoning, while R1-distilled more often breaks a problem into parts, but this would need to be checked against the models' training data and architecture. Both models follow the same overall profile: **Comparison** is the highest category and **Breakdown** the lowest.