# Technical Data Extraction: Model Performance vs. Scaled Parameters

This document extracts data from two scatter plots that compare Mixture-of-Experts (MoE) compression methods, specifically expert pruning and expert merging, across several base models.

## 1. General Metadata and Layout

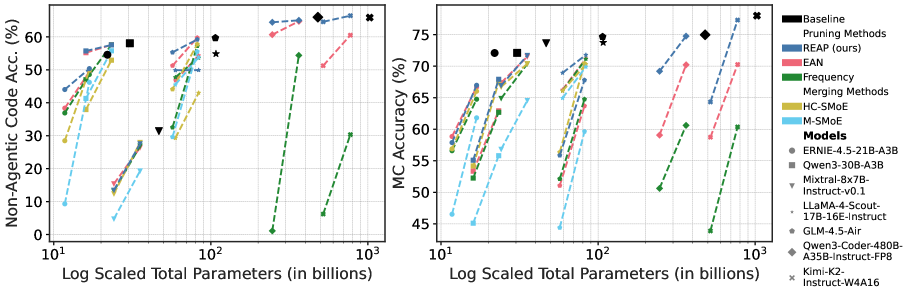

* **Image Structure:** Two side-by-side scatter plots with a shared legend on the far right.

* **X-Axis (Shared):** "Log Scaled Total Parameters (in billions)". The scale is logarithmic, ranging from $10^1$ to $10^3$ (10B to 1,000B total parameters).

* **Y-Axis (Left Plot):** "Non-Agentic Code Acc. (%)". Range: 0 to 70.

* **Y-Axis (Right Plot):** "MC Accuracy (%)". Range: 45 to 80.

* **Legend Location:** Right-hand side of the image.
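Because the x-axis is logarithmic, a marker's horizontal position is proportional to the base-10 logarithm of its total parameter count. The minimal sketch below converts a parameter count in billions into a fractional position along the $10^1$ to $10^3$ axis. The function name `log_axis_fraction` is hypothetical, and the example counts are taken only from the model names in the legend, not read from the plot.

```python
import math

# Axis limits from the figure: 10^1 to 10^3 billion parameters.
AXIS_MIN_B = 10.0
AXIS_MAX_B = 1000.0


def log_axis_fraction(params_b: float) -> float:
    """Return where `params_b` (in billions) falls on the log x-axis, as a 0..1 fraction."""
    if params_b <= 0:
        raise ValueError("parameter count must be positive")
    lo, hi = math.log10(AXIS_MIN_B), math.log10(AXIS_MAX_B)
    return (math.log10(params_b) - lo) / (hi - lo)


# Total parameter counts implied by the model names (billions).
examples = [
    ("ERNIE-4.5-21B-A3B", 21.0),
    ("Qwen3-30B-A3B", 30.0),
    ("Qwen3-Coder-480B-A35B-Instruct-FP8", 480.0),
]
for name, params in examples:
    print(f"{name}: {log_axis_fraction(params):.2f}")
```

Under these assumptions the 480B model sits about 84% of the way along the axis, while the 21B and 30B models sit at roughly 16% and 24%, which is why the smaller base models crowd together near the left edge.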

---

## 2. Legend and Categorization

### A. Baseline Models (Black Markers)

These are the original, uncompressed models.

* **Circle (●):** ERNIE-4.5-21B-A3B

* **Square (■):** Qwen3-30B-A3B

* **Inverted Triangle (▼):** Mixtral-8x7B-Instruct-v0.1

* **Star (★):** LLaMA-4-Scout-17B-16E-Instruct

* **Pentagon (⬟):** GLM-4.5-Air

* **Diamond (◆):** Qwen3-Coder-480B-A35B-Instruct-FP8

* **Cross (✖):** Kimi-K2-Instruct-W4A16

### B. Pruning Methods (Dashed Lines with Markers)

* **REAP (ours) [Blue]:** The highest-performing pruning method at most parameter scales.

* **EAN [Pink]:** Generally tracks REAP at slightly lower accuracy.

* **Frequency [Green]:** Shows the steepest accuracy drop as parameters are reduced and is often the lowest-performing pruning method.

### C. Merging Methods (Dashed Lines with Markers)

* **HC-SMoE [Gold/Yellow]:** Mid-tier performance, broadly comparable to EAN.

* **M-SMoE [Light Blue]:** The weaker of the two merging methods, with significant accuracy degradation at lower parameter counts.

---

## 3. Data Trends and Analysis

### Left Plot: Non-Agentic Code Acc. (%)

* **General Trend:** All methods show a positive correlation between parameter count and accuracy. Compressed variants of a larger base model (e.g., the 480B diamond series) reach higher accuracy than smaller base models at comparable total parameter counts.

* **REAP Performance:** In the clusters between $10^1$ and $10^2$ billion parameters, REAP (blue) stays closest to the black baseline markers.

* **Frequency Method Drop-off:** For the 480B model (diamond), the Frequency method (green) falls from ~55% accuracy at ~300B parameters to near 0% at ~250B parameters, indicating high sensitivity to the compression ratio.

### Right Plot: MC Accuracy (%)

* **General Trend:** As in the left plot, accuracy increases with parameter count. The Pareto front (the best accuracy achieved at each parameter count) is formed by the baseline models (black) and the REAP pruning method (blue).

* **Method Comparison:**

  * **REAP (Blue)** consistently stays at the top of each model's cluster.

  * **EAN (Pink)** and **HC-SMoE (Gold)** occupy the middle ground.

  * **M-SMoE (Light Blue)** and **Frequency (Green)** consistently show the worst performance retention.

* **Scaling Observations:** For the Kimi-K2 (cross) and Qwen3-Coder (diamond) models at the $10^2$ to $10^3$ scale, REAP maintains >70% MC Accuracy, while Frequency and M-SMoE drop toward 60% or lower as they are compressed.
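The Pareto-front claim can be checked mechanically once marker coordinates are read off the plot. A point is on the front if no other point uses no more parameters while reaching at least the same accuracy, being strictly better on at least one of the two. The minimal sketch below assumes each marker is a `(label, params_b, accuracy)` tuple; the function name and the sample coordinates are hypothetical placeholders, not readings from the figure.

```python
from typing import List, Tuple

# (label, total parameters in billions, accuracy in %)
Point = Tuple[str, float, float]


def pareto_front(points: List[Point]) -> List[Point]:
    """Return the points not dominated by any other point.

    q dominates p if q uses no more parameters, reaches at least the same
    accuracy, and is strictly better on at least one of the two axes.
    """
    front = []
    for p in points:
        _, p_params, p_acc = p
        dominated = any(
            q_params <= p_params
            and q_acc >= p_acc
            and (q_params < p_params or q_acc > p_acc)
            for _, q_params, q_acc in points
        )
        if not dominated:
            front.append(p)
    # Sort by parameter count so the front reads left to right, as on the plot.
    return sorted(front, key=lambda pt: pt[1])


# HYPOTHETICAL coordinates for illustration only; not taken from the figure.
sample = [
    ("baseline", 480.0, 76.0),
    ("method_a_25pct", 360.0, 75.5),
    ("method_a_50pct", 240.0, 74.0),
    ("method_b_50pct", 240.0, 62.0),
]
for label, params, acc in pareto_front(sample):
    print(label, params, acc)
```

The pairwise check is quadratic in the number of markers, which is fine for the few dozen points in a plot like this; a sort-and-sweep approach would be preferable for much larger point sets.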

---

## 4. Component Isolation and Spatial Grounding

| Region | Content Description |
| :--- | :--- |
| **Header** | No formal title text; the axis labels provide the context. |
| **Left Chart** | Non-Agentic Code Accuracy. Shows 4 distinct clusters of models being compressed. |
| **Right Chart** | Multiple-Choice (MC) Accuracy. Shows 3 distinct clusters of models being compressed. |
| **Footer** | X-axis tick labels: $10^1$, $10^2$, $10^3$ (log scale). |
| **Right Sidebar** | Legend containing 2 categories of methods (Pruning, Merging) and 7 specific model identifiers. |

---

## 5. Marker Mapping (Approximate Values)

* **High-End ($10^3$):** The Kimi-K2 (✖) baseline is at ~78% MC Accuracy. The REAP version (blue ✖) is slightly below it, while the Frequency version (green ✖) is substantially lower at ~60%.

* **Mid-Range ($10^2$):** The GLM-4.5-Air (⬟) baseline is at ~60% Code Acc. and ~74% MC Acc.

* **Low-End ($10^1$):** Models compressed to ~15B parameters show a wide spread, with REAP holding ~45% Code Acc. while M-SMoE drops below 10%.
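The approximate readings above can be turned into relative figures. The minimal sketch below computes accuracy retention (compressed accuracy divided by baseline accuracy) and the fraction of total parameters removed. These two metric definitions are common conventions assumed here rather than definitions stated in the figure, the helper names are hypothetical, and the inputs are the eyeballed values quoted in this document.

```python
def accuracy_retention(compressed_acc: float, baseline_acc: float) -> float:
    """Fraction of the baseline accuracy kept after compression."""
    if baseline_acc <= 0:
        raise ValueError("baseline accuracy must be positive")
    return compressed_acc / baseline_acc


def params_removed(compressed_b: float, baseline_b: float) -> float:
    """Fraction of total parameters removed by compression."""
    if baseline_b <= 0:
        raise ValueError("baseline parameter count must be positive")
    return 1.0 - compressed_b / baseline_b


# Approximate values read from the plots (see the bullets above).
kimi_freq = accuracy_retention(60.0, 78.0)  # Kimi-K2 Frequency vs. baseline, MC Acc.
coder_cut = params_removed(250.0, 480.0)    # 480B diamond series compressed to ~250B

print(f"Kimi-K2 Frequency retention: {kimi_freq:.1%}")
print(f"480B model parameters removed: {coder_cut:.1%}")
```

With these inputs, the Frequency-pruned Kimi-K2 keeps about 77% of its baseline MC accuracy, and compressing the 480B model to ~250B removes about 48% of its parameters. Because the inputs are visual estimates, the outputs inherit the same uncertainty.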