EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

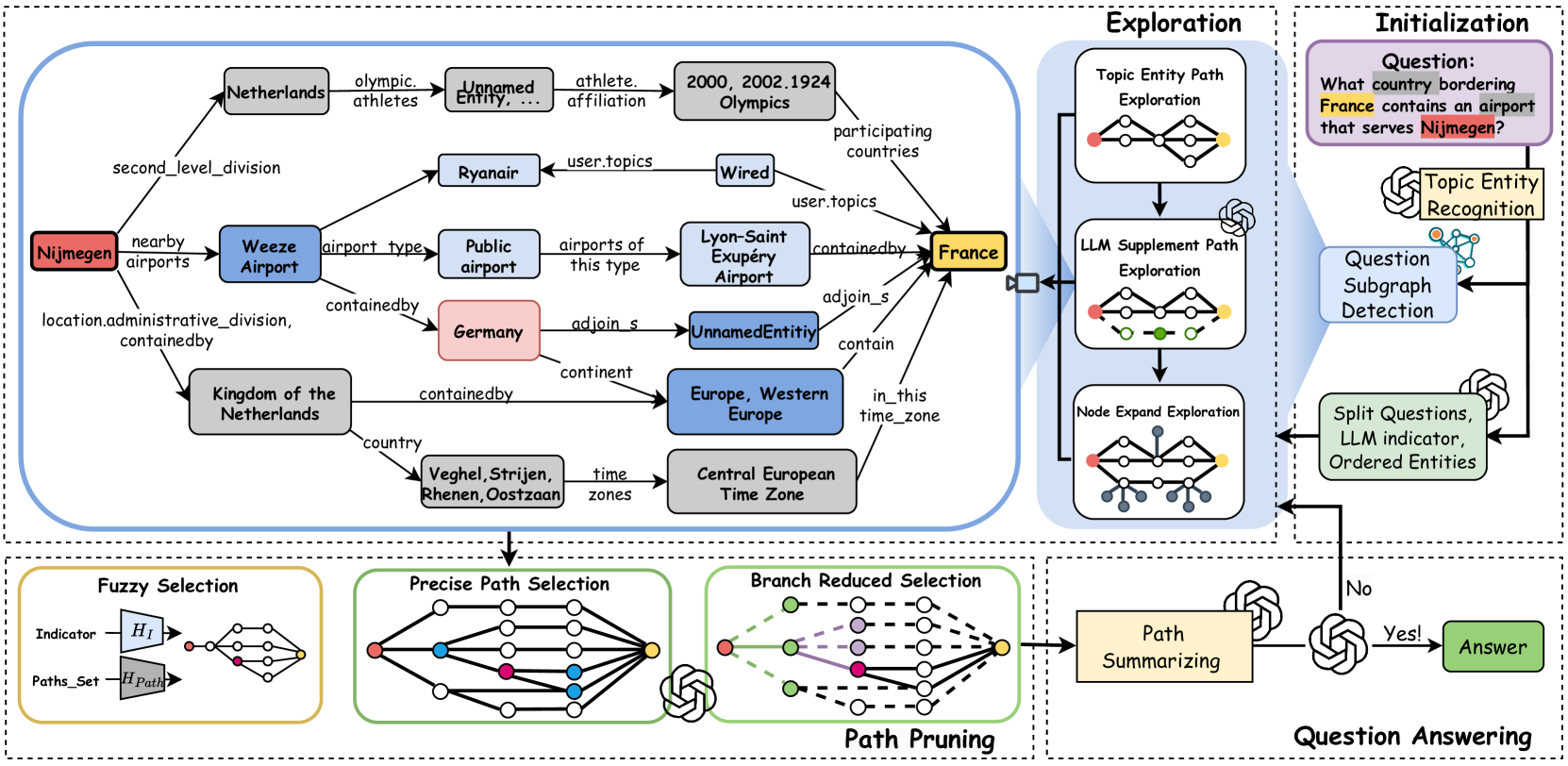

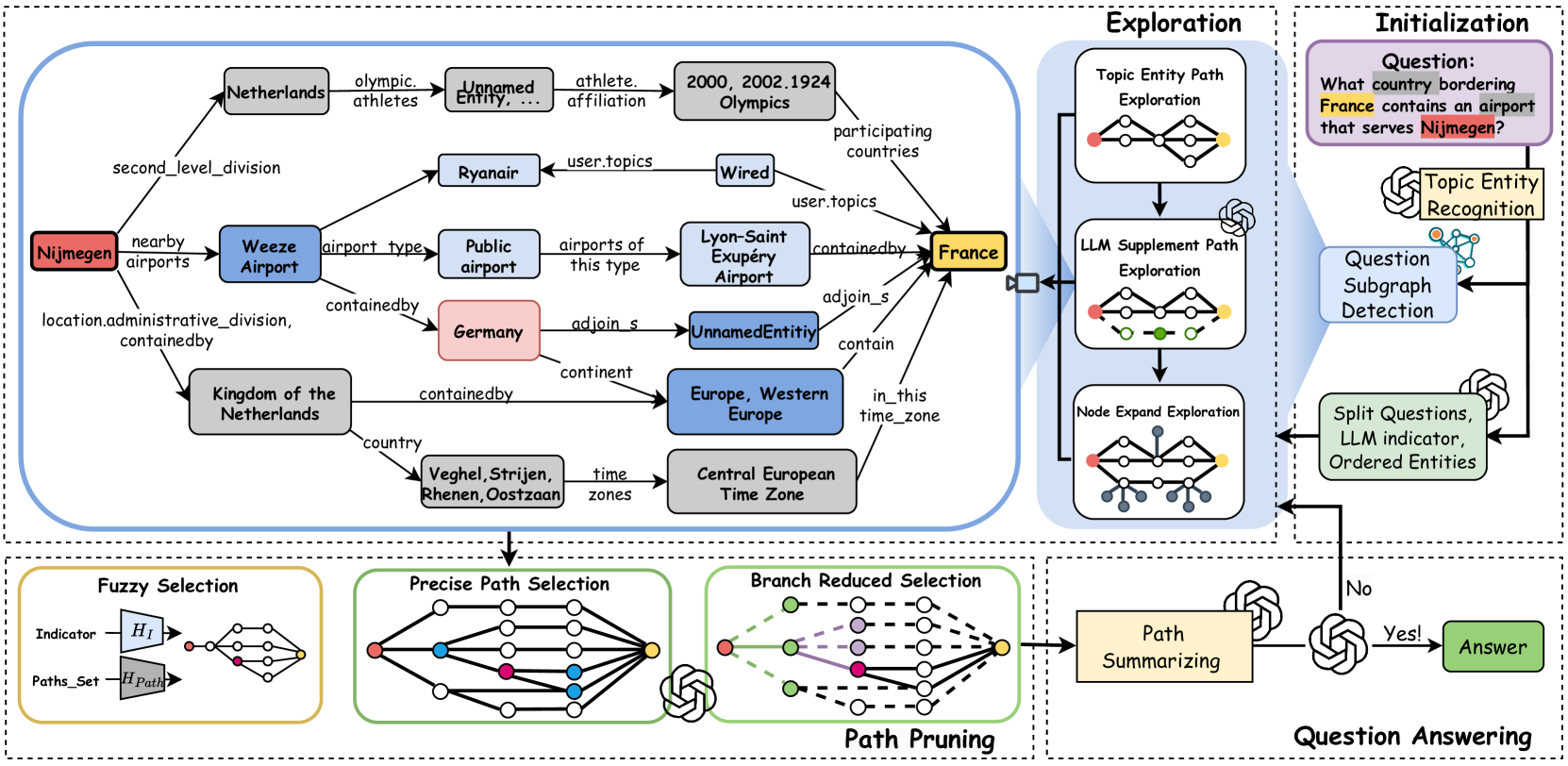

## Technical Diagram: Knowledge Graph-Based Question Answering System

### Overview

This image is a detailed technical diagram illustrating a multi-stage system for answering complex natural language questions by exploring and reasoning over a knowledge graph. The system uses a combination of graph traversal, Large Language Model (LLM) assistance, and path selection/pruning techniques. The diagram is divided into four main dashed-line boxes representing sequential phases: **Initialization**, **Exploration**, **Path Pruning**, and **Question Answering**. A large, central knowledge graph sub-diagram is the focal point of the Exploration phase.

### Components (System Phases & Modules)

**1. Initialization (Top-Right Box)**

* **Question:** "What country bordering **France** contains an airport that serves **Nijmegen**?" (The words "France" and "Nijmegen" are highlighted in yellow and red, respectively).

* **Components:**

    * **Topic Entity Recognition:** A module icon (brain with nodes) processes the question.

    * **Question Subgraph Detection:** A module icon (network graph) follows.

    * **Split Questions, LLM indicator, Ordered Entities:** A green box outputs processed question components.

* **Flow:** Arrows indicate the question feeds into Topic Entity Recognition, then to Question Subgraph Detection, and finally to the Split Questions module.
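
The diagram does not say how topic entities are recognized or how the "Ordered Entities" output is ordered. As a minimal sketch only, the following assumes a simple name lookup against a list of known entities and orders the hits by where they appear in the question. The function name `recognize_topic_entities` and the `known_entities` list are hypothetical.

```python
def recognize_topic_entities(question, known_entities):
    """Return the known entities mentioned in the question, in order of appearance.

    Assumption: plain case-insensitive substring matching stands in for the
    Topic Entity Recognition module, whose real method is not shown.
    """
    lowered = question.lower()
    hits = [(lowered.find(name.lower()), name) for name in known_entities]
    # str.find returns -1 when the name is absent, so drop those entries.
    return [name for position, name in sorted(hits) if position >= 0]


QUESTION = "What country bordering France contains an airport that serves Nijmegen?"
TOPIC_ENTITIES = recognize_topic_entities(QUESTION, ["Nijmegen", "France", "Germany"])
```

Here "Germany" is not returned because it never appears in the question text; it only surfaces later as a candidate answer during exploration.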

**2. Exploration (Top-Center Box)**

* **Components (Three Exploration Strategies):**

    * **Topic Entity Path Exploration:** A small diagram showing a path from a red node to a yellow node through white nodes.

    * **LLM Supplement Path Exploration:** A small diagram showing multiple paths (red, green, yellow nodes) with an LLM icon (brain) connected.

    * **Node Expand Exploration:** A small diagram showing a central node expanding to many surrounding nodes.

* **Flow:** These three exploration modules feed into the large central knowledge graph diagram.

**3. Central Knowledge Graph (Main Diagram within Exploration)**

This is a directed graph with labeled edges. Nodes are colored boxes, and edges are arrows with relation labels.

* **Key Nodes (Entities):**

    * **Nijmegen** (Red box, left side)

    * **Weeze Airport** (Blue box)

    * **Germany** (Pink box)

    * **France** (Yellow box, right side)

    * **Kingdom of the Netherlands** (Grey box)

    * **Europe, Western Europe** (Blue box)

    * **Central European Time Zone** (Grey box)

    * **Lyon-Saint Exupéry Airport** (Blue box)

    * **Public airport** (Grey box)

    * **Ryanair** (Grey box)

    * **Wired** (Grey box)

    * **2000, 2002, 1924 Olympics** (Grey box)

    * **Unnamed Entity, ...** (Grey box, appears twice)

    * **Veghel, Strijen, Rhenen, Oostzaan** (Grey box)

* **Key Edges (Relations):**

    * `nearby_airports` (Nijmegen -> Weeze Airport)

    * `airport_type` (Weeze Airport -> Public airport)

    * `containedby` (Weeze Airport -> Germany)

    * `adjoin_s` (Germany -> UnnamedEntity; UnnamedEntity -> France)

    * `continent` (Germany -> Europe, Western Europe)

    * `containedby` (Kingdom of the Netherlands -> Europe, Western Europe)

    * `country` (Kingdom of the Netherlands -> Veghel, Strijen, Rhenen, Oostzaan)

    * `time_zones` (Veghel... -> Central European Time Zone)

    * `in_this_time_zone` (France -> Central European Time Zone)

    * `airports_of_this_type` (Public airport -> Lyon-Saint Exupéry Airport)

    * `containedby` (Lyon-Saint Exupéry Airport -> France)

    * `user_topics` (Wired -> Ryanair; Wired -> France)

    * `olympic_athletes` (Nijmegen -> Unnamed Entity, ...)

    * `athlete_affiliation` (Unnamed Entity... -> 2000, 2002, 1924 Olympics)

    * `participating_countries` (2000... Olympics -> France)

    * `second_level_division` (Nijmegen -> Netherlands)

    * `location.administrative_division, containedby` (Nijmegen -> Kingdom of the Netherlands)
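
To make the graph structure concrete, a subset of the edges listed above can be written as (head, relation, tail) triples and indexed by head entity. This is an illustrative sketch, not the system's actual storage format; the names `TRIPLES`, `build_index`, and `INDEX` are assumptions, and grouped labels such as "Europe, Western Europe" are kept as single nodes exactly as the diagram draws them.

```python
from collections import defaultdict

# A subset of the diagram's edges as (head, relation, tail) triples.
TRIPLES = [
    ("Nijmegen", "nearby_airports", "Weeze Airport"),
    ("Weeze Airport", "airport_type", "Public airport"),
    ("Weeze Airport", "containedby", "Germany"),
    ("Germany", "adjoin_s", "UnnamedEntity"),
    ("UnnamedEntity", "adjoin_s", "France"),
    ("Germany", "continent", "Europe, Western Europe"),
    ("Public airport", "airports_of_this_type", "Lyon-Saint Exupéry Airport"),
    ("Lyon-Saint Exupéry Airport", "containedby", "France"),
    ("Wired", "user_topics", "Ryanair"),
    ("Wired", "user_topics", "France"),
]


def build_index(triples):
    """Map each head entity to its outgoing (relation, tail) pairs."""
    out_edges = defaultdict(list)
    for head, relation, tail in triples:
        out_edges[head].append((relation, tail))
    return dict(out_edges)


INDEX = build_index(TRIPLES)
```

Looking up `INDEX["Weeze Airport"]` then yields both the `airport_type` and `containedby` edges, which is the kind of one-hop expansion the Node Expand Exploration icon suggests.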

**4. Path Pruning (Bottom-Center Box)**

* **Components (Three Selection/Pruning Stages):**

    * **Fuzzy Selection:** Takes `Indicator` (H_I) and `Paths_Set` (H_Path) as input, outputs a simplified graph.

    * **Precise Path Selection:** Shows a more complex graph with colored nodes (red, blue, yellow) and an LLM icon.

    * **Branch Reduced Selection:** Shows a pruned graph with dashed lines indicating removed branches, colored nodes (green, purple, red, yellow), and an LLM icon.

* **Flow:** The output of the central knowledge graph feeds into Fuzzy Selection, then to Precise Path Selection, then to Branch Reduced Selection.
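
The diagram does not specify how Fuzzy Selection compares the indicator with the path set. One plausible reading is a cheap similarity filter that runs before the LLM-based stages. The sketch below swaps in plain Jaccard token overlap as a stand-in for whatever scoring the system really uses; `tokens`, `jaccard`, `fuzzy_select`, and the example strings are all hypothetical.

```python
import re


def tokens(text):
    """Lowercase alphanumeric tokens; underscores in relation names act as separators."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    """Jaccard overlap of two token sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def fuzzy_select(indicator, paths, keep):
    """Keep the `keep` paths whose text overlaps most with the indicator.

    Assumption: token overlap stands in for the real Fuzzy Selection score.
    """
    indicator_tokens = tokens(indicator)
    ranked = sorted(paths, key=lambda p: jaccard(indicator_tokens, tokens(p)), reverse=True)
    return ranked[:keep]


INDICATOR = "Nijmegen airport country France"
CANDIDATE_PATHS = [
    "Wired user_topics France",
    "Nijmegen olympic_athletes Unnamed Entity athlete_affiliation 1924 Olympics participating_countries France",
    "Nijmegen nearby_airports Weeze Airport containedby Germany adjoin_s France",
]
SHORTLIST = fuzzy_select(INDICATOR, CANDIDATE_PATHS, keep=1)
```

With these inputs the airport path shares the most tokens with the indicator, so it is the one that would be passed on to the LLM-assisted Precise Path Selection and Branch Reduced Selection stages.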

**5. Question Answering (Bottom-Right Box)**

* **Components:**

    * **Path Summarizing:** A beige box with an LLM icon.

    * **Decision Diamond:** A diamond shape with "Yes!" and "No" outputs.

    * **Answer:** A green box.

* **Flow:** The pruned paths from the previous stage go to Path Summarizing. The output goes to a decision point (likely checking if a valid answer path is found). If "Yes!", it proceeds to the final **Answer**. If "No", an arrow loops back to the **Split Questions, LLM indicator, Ordered Entities** module in the Initialization phase, suggesting an iterative refinement process.
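
The control flow described above, including the "No" loop back to the Split Questions module, can be sketched as a bounded retry loop. Every stage is passed in as a callable because the diagram shows the stages but not their implementations; the function name `answer_question`, the `feedback` argument, and the `max_rounds` cap are assumptions added for illustration.

```python
def answer_question(question, split, explore, prune, summarize, max_rounds=3):
    """Run split -> explore -> prune -> summarize until an answer is accepted.

    split(question, feedback)  -> exploration state (feedback is None on round one)
    explore(state)             -> candidate paths
    prune(paths)               -> selected paths
    summarize(question, paths) -> (answer, sufficient) pair
    Returns the answer, or None if no round produced a sufficient one.
    """
    state = split(question, feedback=None)
    for _ in range(max_rounds):
        paths = prune(explore(state))
        answer, sufficient = summarize(question, paths)
        if sufficient:  # the "Yes!" branch of the decision diamond
            return answer
        # The "No" branch: go back to question splitting with what was found.
        state = split(question, feedback=paths)
    return None
```

The cap on rounds is a design choice of this sketch: the diagram shows the loop but gives no stopping rule for a question that never produces an answer.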

### Detailed Analysis

The diagram traces the process of answering the sample question: "What country bordering France contains an airport that serves Nijmegen?"

1. **Initialization:** The system identifies key entities ("France", "Nijmegen") and decomposes the question.

2. **Exploration:** It explores the knowledge graph starting from these entities. The central graph shows potential paths:

    * From **Nijmegen** to **Weeze Airport** (`nearby_airports`).

    * From **Weeze Airport** to **Germany** (`containedby`).

    * From **Germany** to **France** via an `adjoin_s` (adjoins) relation through an `UnnamedEntity`.

    * This path (Nijmegen -> Weeze Airport -> Germany -> France) appears to satisfy the question's conditions: Germany borders France and contains an airport (Weeze) that serves Nijmegen.

3. **Path Pruning:** The system evaluates and selects the most relevant paths from the explored graph using fuzzy, precise, and branch-reduction techniques, likely leveraging LLMs for semantic understanding.

4. **Question Answering:** The selected path is summarized to generate the final answer ("Germany").
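
The walkthrough above amounts to finding bounded-length paths between the two topic entities. As a sketch under stated assumptions (a plain depth-limited search over directed edges, not the system's actual exploration strategy), the following enumerates every simple path from Nijmegen to France within four hops. `EDGES`, `find_paths`, and `entities` are hypothetical names, and only a few of the diagram's edges are included.

```python
# A few directed edges from the diagram, as (head, relation, tail).
EDGES = [
    ("Nijmegen", "nearby_airports", "Weeze Airport"),
    ("Weeze Airport", "airport_type", "Public airport"),
    ("Weeze Airport", "containedby", "Germany"),
    ("Germany", "adjoin_s", "UnnamedEntity"),
    ("UnnamedEntity", "adjoin_s", "France"),
    ("Germany", "continent", "Europe, Western Europe"),
    ("Public airport", "airports_of_this_type", "Lyon-Saint Exupéry Airport"),
    ("Lyon-Saint Exupéry Airport", "containedby", "France"),
]


def find_paths(edges, start, goal, max_hops):
    """Return every simple directed path from start to goal with at most max_hops edges."""
    index = {}
    for head, relation, tail in edges:
        index.setdefault(head, []).append((relation, tail))
    results = []

    def walk(node, path, visited):
        if node == goal:
            results.append(list(path))
            return
        if len(path) == max_hops:
            return
        for relation, tail in index.get(node, []):
            if tail not in visited:  # keep paths simple (no repeated entities)
                path.append((node, relation, tail))
                walk(tail, path, visited | {tail})
                path.pop()

    walk(start, [], {start})
    return results


def entities(path):
    """Entity sequence visited by a path of (head, relation, tail) steps."""
    return [path[0][0]] + [tail for _, _, tail in path]


PATHS = find_paths(EDGES, "Nijmegen", "France", max_hops=4)
```

On this subset the search finds two routes to France: one through Germany and one through the "Public airport" type node and Lyon-Saint Exupéry Airport. Only the first passes through a country that contains Weeze Airport, which illustrates why a pruning stage is needed after exploration.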

### Key Observations

* **Color Coding:** Entities are color-coded: Red (Nijmegen - source), Yellow (France - target), Blue (Airports/Regions), Pink (Germany - candidate answer), Grey (other entities).

* **Iterative Loop:** The "No" path from the answer decision back to the Split Questions module in the Initialization phase indicates that the system can run another round when no answer is found.

* **LLM Integration:** LLM icons are present in Exploration (supplement paths), Path Pruning (Precise and Branch Reduced Selection), and Path Summarizing, indicating they are used for reasoning, path evaluation, and answer generation.

* **Graph Complexity:** The central knowledge graph contains multiple, potentially distracting paths (e.g., connections to Olympics, Ryanair, time zones) that the pruning stages must filter out.

### Interpretation

This diagram represents a **neuro-symbolic AI system** for question answering. It combines the structured reasoning of knowledge graphs (the symbolic part) with the flexible language understanding of Large Language Models (the neural part).

* **What it demonstrates:** The system can break down a complex, multi-hop natural language question into a graph traversal problem. It explores a wide neighborhood of relevant entities, then uses LLM-assisted selection stages to prune irrelevant information and converge on the most plausible answer path.

* **How elements relate:** The phases form a pipeline: **Parse -> Explore -> Prune -> Answer**. The central knowledge graph is the shared data structure manipulated by each phase. The LLMs act as reasoning engines within the symbolic framework, guiding exploration and selection.

* **Notable aspects:** The inclusion of "Fuzzy Selection" suggests handling of uncertainty or partial matches in the graph. The iterative loop gives the system a way to re-attempt a question when the first pass yields no answer. The presence of extraneous nodes (like "Wired" or "Olympics") in the exploration graph shows the core challenge: distinguishing relevant from irrelevant connections in a densely connected knowledge base. The final answer ("Germany") is derived not from a direct "serves" relation but from a chain of relations (`nearby_airports` -> `containedby` -> `adjoin_s`), which shows the system performing compositional reasoning.
