## Screenshot: AI Tutoring Chat Interface with Math Problem and Annotated Response

### Overview

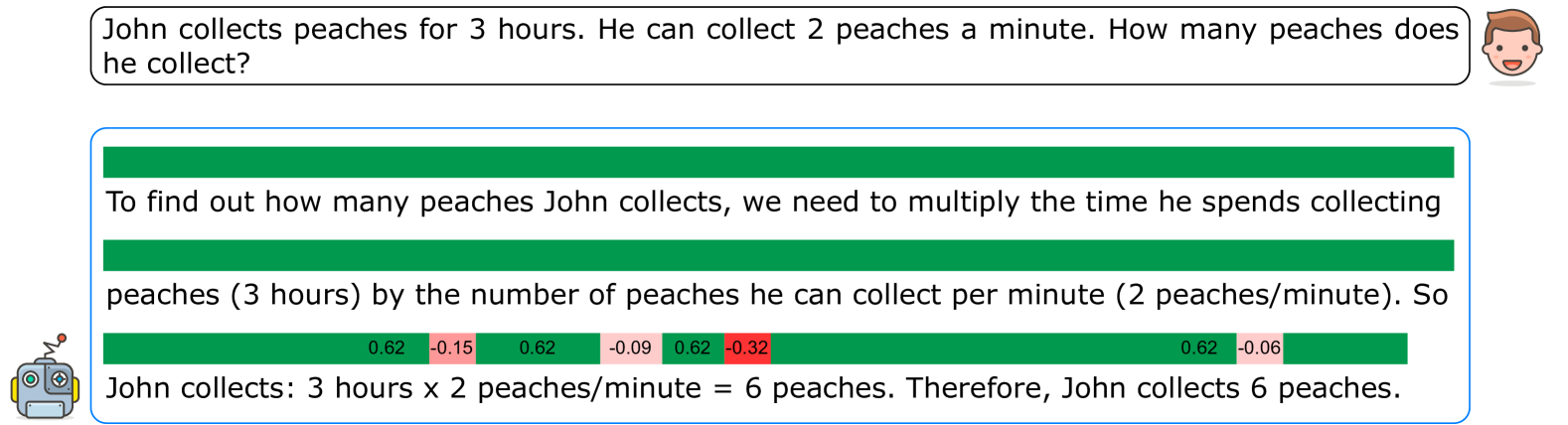

The image is a screenshot of a chat interface, likely from an educational or AI tutoring application. It displays a user's math word problem and an AI-generated response. The response includes an incorrect solution to the problem, and beneath the text, there is a visualization of colored bars with numerical values, which appear to be token-level confidence scores or probability outputs from the language model that generated the response.

### Components/Axes

1. **User Query Bubble (Top):**

* **Position:** Top of the image, spanning most of the width.

* **Content:** A rounded rectangle with a light gray border containing the user's question.

* **Icon:** A cartoon-style user avatar (a smiling person's head) is positioned to the right of the bubble.

* **Text:** "John collects peaches for 3 hours. He can collect 2 peaches a minute. How many peaches does he collect?"

2. **AI Response Box (Center):**

* **Position:** Below the user query, centered.

* **Content:** A larger rounded rectangle with a light blue border containing the AI's step-by-step response.

* **Icon:** A cartoon-style robot avatar is positioned to the left of the box.

* **Text:** The response is split into three lines of text, interspersed with colored bars.

3. **Token Confidence/Probability Bars (Embedded in Response):**

* **Position:** Located between the lines of text within the AI response box.

* **Description:** Horizontal bars composed of adjacent colored segments (green and red/pink). Each segment contains a numerical value.

* **Values (from left to right):** `0.62` (green), `-0.15` (red), `0.62` (green), `-0.09` (pink), `0.62` (green), `-0.32` (red), `0.62` (green), `-0.06` (pink).

* **Note:** The exact mapping of these values to specific words or tokens in the adjacent text is not explicitly labeled, but they are visually aligned with the text flow.

### Detailed Analysis / Content Details

* **Full Transcription of AI Response Text:**

"To find out how many peaches John collects, we need to multiply the time he spends collecting peaches (3 hours) by the number of peaches he can collect per minute (2 peaches/minute). So John collects: 3 hours x 2 peaches/minute = 6 peaches. Therefore, John collects 6 peaches."

* **Mathematical Analysis of the Problem:**

* **Given:** Time = 3 hours, Rate = 2 peaches/minute.

* **Required Calculation:** To find total peaches, time and rate must use the same unit. The correct method is to convert hours to minutes (3 hours * 60 minutes/hour = 180 minutes), then multiply by the rate (180 minutes * 2 peaches/minute = 360 peaches).

* **AI's Calculation:** The AI incorrectly multiplies 3 hours directly by 2 peaches/minute, resulting in 6 peaches. This is a unit mismatch error.

* **Confidence Bar Analysis:**

* The bars show a pattern of high positive values (`0.62`) interspersed with smaller negative values.

* The highest negative value (`-0.32`) is the sixth segment, which may correspond to a point of low confidence or error in the generation process, potentially aligning with the flawed calculation step.

### Key Observations

1. **Critical Mathematical Error:** The AI's solution is fundamentally incorrect due to a failure to perform unit conversion (hours to minutes). The correct answer should be 360 peaches, not 6.

2. **Visualization of Model Uncertainty:** The colored bars provide a rare internal view of the language model's generation process, showing fluctuating confidence scores for different tokens. The presence of negative scores suggests points where the model's predicted probability was low or contradicted by context.

3. **Interface Design:** The layout is clean and typical of a chatbot, using avatars and bordered boxes to distinguish between user and system messages.

### Interpretation

This image serves as a clear case study in the limitations of large language models (LLMs) when performing multi-step reasoning, particularly with unit conversions. The AI correctly identifies the need to multiply time by rate but fails at the crucial step of ensuring dimensional consistency, a common pitfall in word problems.

The embedded confidence bars are particularly insightful. They suggest the model may have had low confidence (negative scores) at specific points in its reasoning chain, possibly during the formulation of the incorrect equation. This visualization could be a debugging tool for developers to identify where a model's reasoning breaks down.

The discrepancy between the confident, explanatory tone of the text and the underlying mathematical error highlights a key challenge in AI safety and reliability: models can present incorrect information with high fluency and apparent certainty. This underscores the importance of human oversight and verification, especially in educational or technical contexts. The image doesn't just show a wrong answer; it provides a glimpse into the "black box," showing that the model's internal uncertainty did not prevent it from outputting a confidently stated, yet false, conclusion.