TECHNICAL ASSET FINGERPRINT

f54284d3bf3529a45aa8a9e2

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

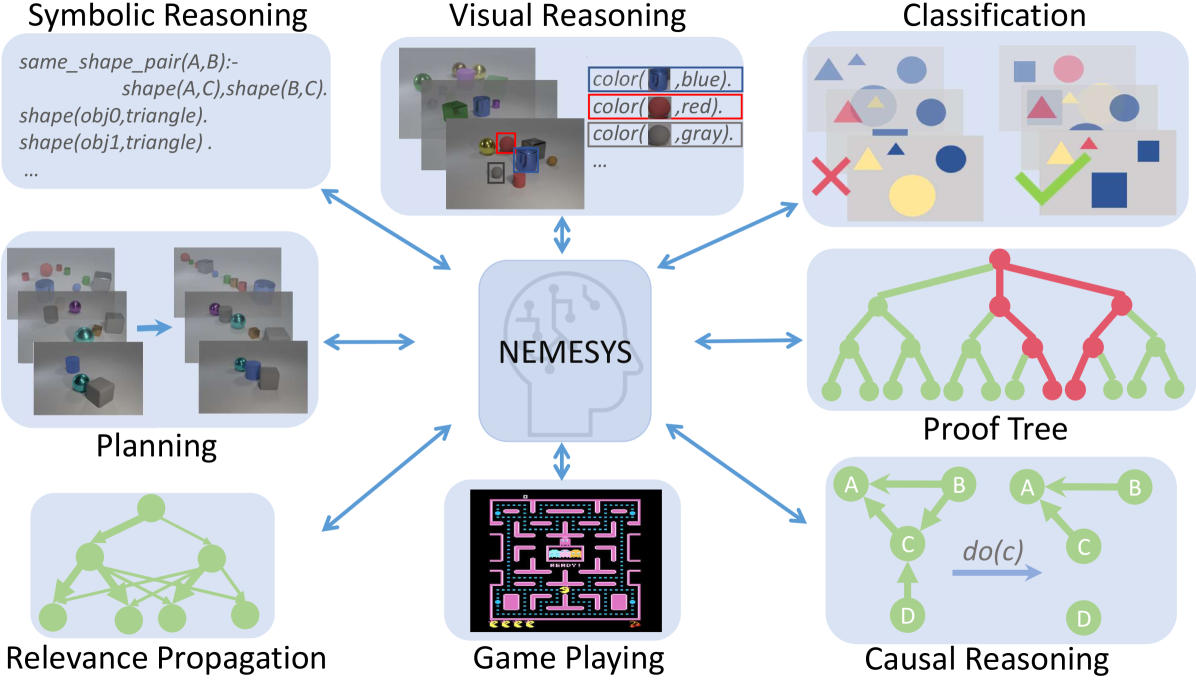

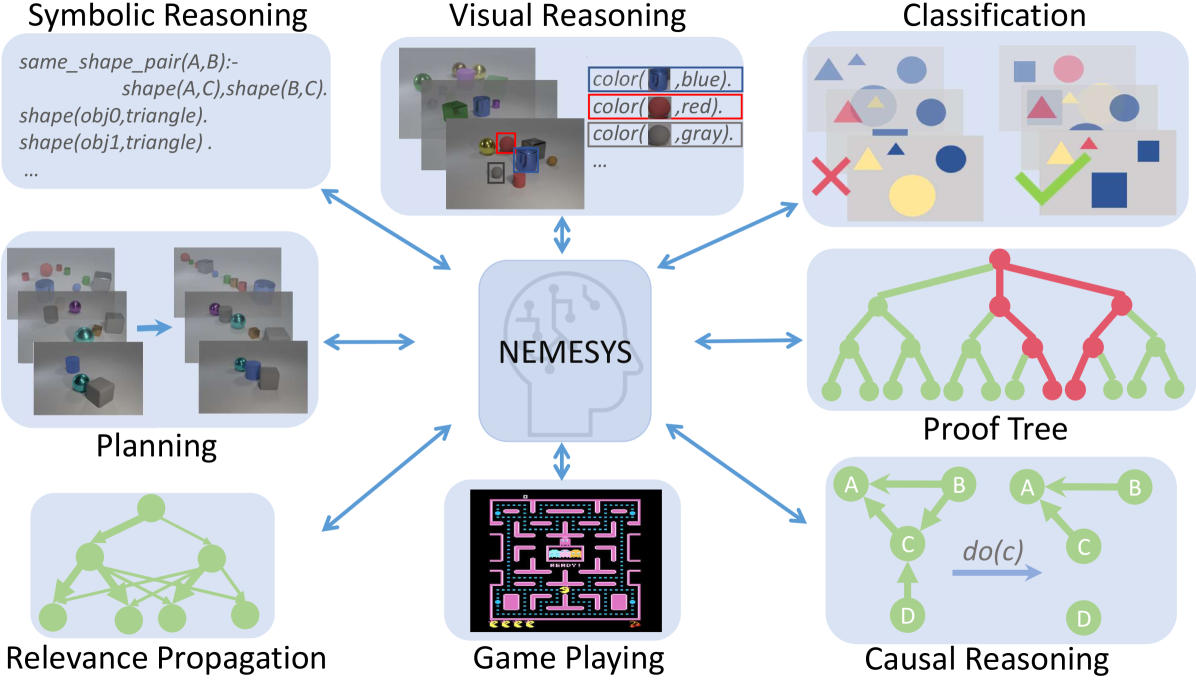

## Diagram: NEMESYS - A Multi-Modal Reasoning System Architecture

### Overview

The image is a conceptual diagram illustrating a central artificial intelligence system named **NEMESYS**, which interacts bidirectionally with eight distinct reasoning or task modules. The diagram is designed to showcase the system's capability to integrate and process diverse types of cognitive and problem-solving tasks. The overall layout features a central hub with eight surrounding modules, each connected to the center by double-headed blue arrows, indicating a two-way flow of information or processing.

### Components/Axes

The diagram has nine primary components, a central hub and eight surrounding modules, arranged in a radial pattern:

1. **Central Hub:**
   * **Label:** "NEMESYS"
   * **Visual:** A stylized icon of a human head in profile, filled with a light blue color and overlaid with a white circuit board pattern, symbolizing an artificial brain or cognitive architecture.
   * **Position:** Center of the image.

2. **Surrounding Modules (8 total):** Each module is contained within a light blue, rounded rectangular box and is connected to the central NEMESYS hub by a blue, double-headed arrow.
   * **Top-Left:** **Symbolic Reasoning**
   * **Top-Center:** **Visual Reasoning**
   * **Top-Right:** **Classification**
   * **Right-Center:** **Proof Tree**
   * **Bottom-Right:** **Causal Reasoning**
   * **Bottom-Center:** **Game Playing**
   * **Bottom-Left:** **Relevance Propagation**
   * **Left-Center:** **Planning**

### Detailed Analysis / Content Details

Each module contains specific visual and textual content representing its function:

* **Symbolic Reasoning (Top-Left):**
  * **Content:** Contains text resembling Prolog or other logic programming code.
  * **Transcribed Text:**

    ```
    same_shape_pair(A,B):-
        shape(A,C),shape(B,C).
    shape(obj0,triangle).
    shape(obj1,triangle).
    ...
    ```

* **Visual Reasoning (Top-Center):**
  * **Content:** A composite image showing a 3D scene with various colored objects (spheres, cubes, cylinders) on a surface. Overlaid on the right is a table-like structure with color swatches and labels.
  * **Transcribed Text (Table):**

    ```
    color( [blue swatch], blue).
    color( [red swatch], red).
    color( [gray swatch], gray).
    ...
    ```

* **Classification (Top-Right):**
  * **Content:** Two panels comparing classification outcomes. The left panel shows various shapes (triangles, circles, squares) with a large red "X" over a yellow triangle, indicating misclassification. The right panel shows similar shapes with a large green checkmark over a yellow triangle, indicating correct classification.

* **Proof Tree (Right-Center):**
  * **Content:** A hierarchical tree diagram. The root and some nodes are colored red, while the majority of leaf nodes are green. This likely represents a logical deduction or search tree where red nodes might indicate a failed path or a specific branch of interest, and green nodes indicate successful or explored paths.

* **Causal Reasoning (Bottom-Right):**
  * **Content:** A directed acyclic graph (DAG) with nodes labeled A, B, C, D. Arrows show causal relationships (e.g., A -> C, B -> C, C -> D). A second version of the graph, linked by an arrow labeled "do(c)", shows node C intervened upon (highlighted), illustrating the concept of causal intervention.

* **Game Playing (Bottom-Center):**
  * **Content:** A screenshot from the classic arcade game **Pac-Man**. It shows the game maze, Pac-Man, ghosts, dots, and power pellets.

* **Relevance Propagation (Bottom-Left):**
  * **Content:** A network graph with interconnected green nodes. The structure suggests a neural network or a graph where information or "relevance" is propagated between nodes.

* **Planning (Left-Center):**
  * **Content:** A sequence of four 3D rendered scenes showing the progressive rearrangement of objects (cubes, spheres) on a surface. An arrow points from the first scene to the last, indicating a plan or sequence of actions to achieve a goal state.
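To make the rule transcribed for the Symbolic Reasoning panel concrete, here is a minimal Python sketch of what `same_shape_pair(A,B)` derives from the two visible `shape/2` facts. The function name `same_shape_pairs` and the list-of-tuples representation are illustrative assumptions, not part of the diagram, and the facts elided by `...` are deliberately not reconstructed.

```python
from itertools import product

# Facts transcribed from the panel. The facts hidden behind "..." are unknown
# and are intentionally left out rather than guessed.
SHAPE_FACTS = [("obj0", "triangle"), ("obj1", "triangle")]


def same_shape_pairs(shape_facts):
    """Enumerate (A, B) such that shape(A, C) and shape(B, C) hold for some C.

    Mirrors the rule same_shape_pair(A,B) :- shape(A,C), shape(B,C).
    The rule has no A \\= B guard, so an object is also paired with itself.
    """
    return [
        (a, b)
        for (a, shape_a), (b, shape_b) in product(shape_facts, repeat=2)
        if shape_a == shape_b
    ]


pairs = same_shape_pairs(SHAPE_FACTS)
print(pairs)
# [('obj0', 'obj0'), ('obj0', 'obj1'), ('obj1', 'obj0'), ('obj1', 'obj1')]
```

As written, the rule also pairs each object with itself. A Prolog program that wanted only distinct pairs would typically add a goal such as `A \= B`, which the transcribed text does not show.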

### Key Observations

1. **Bidirectional Integration:** Every module has a direct, two-way connection to the central NEMESYS system, emphasizing that it is not a simple pipeline but an integrated architecture where the core system and specialized modules constantly interact.

2. **Diversity of Tasks:** The diagram explicitly covers a wide spectrum of AI challenges: logical reasoning (Symbolic Reasoning, Proof Tree), perception (Visual Reasoning, Classification), sequential decision-making (Planning, Game Playing), and relational modeling (Causal Reasoning, Relevance Propagation).

3. **Visual Metaphors:** Each module uses a distinct visual metaphor appropriate to its domain (code for symbolic reasoning, 3D scenes for planning and visual reasoning, graphs for causal reasoning and relevance propagation, a game screenshot for game playing).

4. **Color Coding:** The use of color is functional: red/green in Proof Tree and Classification denotes success/failure or different states; the consistent blue of the central hub and arrows unifies the diagram.

### Interpretation

This diagram presents **NEMESYS** as a proposed unified cognitive architecture that pursues more general artificial intelligence by integrating multiple, traditionally separate AI sub-fields. The central "brain" icon suggests that it acts as a central executive or common substrate.

* **What it suggests:** The architecture implies that robust intelligence requires the synergistic combination of different reasoning types. For instance, solving a complex real-world problem might require **Visual Reasoning** to perceive the scene, **Symbolic Reasoning** to represent knowledge, **Causal Reasoning** to understand effects of actions, and **Planning** to devise a solution sequence.

* **How elements relate:** The bidirectional arrows are the most critical relational element. They indicate that NEMESYS both *utilizes* the capabilities of each module (e.g., sending perceptual data to the Classification module) and *informs or trains* them (e.g., using high-level symbolic knowledge to guide visual attention). The modules are not isolated silos but components of a greater whole.

* **Notable Anomalies/Outliers:** The **Game Playing (Pac-Man)** module stands out as a specific, well-defined benchmark environment, whereas the others are more abstract reasoning tasks. This may indicate that the system is validated on both standardized benchmarks and open-ended reasoning problems. The **Proof Tree** and **Relevance Propagation** graphs are visually similar but labeled differently, hinting at a distinction between explicit logical proof search and implicit, possibly neural, information flow.
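The `do(c)` intervention described for the Causal Reasoning panel can be sketched as graph surgery: intervening on a node removes every edge pointing into it and leaves its outgoing edges alone. The Python below is a minimal sketch, not NEMESYS code. It assumes the edge set is exactly the three example edges named in the description (A -> C, B -> C, C -> D), and the helper names `do` and `descendants` are hypothetical.

```python
# Edges taken from the description's examples; the actual figure may contain
# other edges (for instance involving D) that are not listed there.
EDGES = {("A", "C"), ("B", "C"), ("C", "D")}


def do(edges, target):
    """Return the graph after do(target): drop every edge pointing into target."""
    return {(src, dst) for (src, dst) in edges if dst != target}


def descendants(edges, node):
    """Return all nodes reachable from `node` along directed edges."""
    seen, stack = set(), [node]
    while stack:
        current = stack.pop()
        for src, dst in edges:
            if src == current and dst not in seen:
                seen.add(dst)
                stack.append(dst)
    return seen


intervened = do(EDGES, "C")
print(sorted(intervened))                    # [('C', 'D')]
print(sorted(descendants(EDGES, "A")))       # ['C', 'D']
print(sorted(descendants(intervened, "A")))  # []
print(sorted(descendants(intervened, "C")))  # ['D']
```

Before the intervention, A reaches both C and D. After it, A and B no longer influence C, while C still influences D. That asymmetry is what separates intervening on a variable from merely observing it.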

In essence, the diagram is a blueprint for a holistic AI system, arguing that the path to more general intelligence lies not in perfecting a single algorithm but in architecting a framework where diverse cognitive modules collaborate through a central, integrative core.
