## Line Chart: L1-LLaMA | MATH500

### Overview

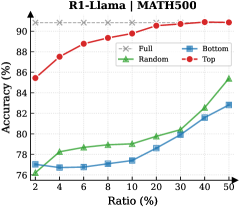

The image is a line chart comparing the accuracy of three data selection strategies (Top, Random, and Bottom) against a full-data baseline (Full) for a model named "L1-LLaMA" on the "MATH500" benchmark. The chart plots accuracy against an increasing ratio of data used.

### Components/Axes

* **Chart Title:** "L1-LLaMA | MATH500" (located at the top center).

* **Y-Axis:** Labeled "Accuracy (%)". The scale runs from 76 to 90, with major tick marks at 76, 78, 80, 82, 84, 86, 88, and 90.

* **X-Axis:** Labeled "Ratio (%)". The scale is non-linear, with marked points at 2, 4, 6, 8, 10, 20, 30, 40, and 50.

* **Legend:** Located in the bottom-right quadrant of the chart area. It defines four data series:

    * **Full:** Gray line with 'x' markers.

    * **Random:** Green line with upward-pointing triangle markers.

    * **Bottom:** Blue line with square markers.

    * **Top:** Red line with circle markers.
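The non-linearity of the x-axis can be seen from the marked values alone: the step between consecutive ticks changes partway along the axis. The snippet below is a minimal sketch of that check; the variable names are illustrative, and only the tick values come from the chart.

```python
# Tick values as read off the chart's x-axis ("Ratio (%)").
ticks = [2, 4, 6, 8, 10, 20, 30, 40, 50]

# Difference between each pair of consecutive ticks.
steps = [b - a for a, b in zip(ticks, ticks[1:])]

print(steps)  # [2, 2, 2, 2, 10, 10, 10, 10]
```

The step is 2 up to a ratio of 10% and 10 afterwards, so equal distances along the axis do not correspond to equal changes in ratio.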

### Detailed Analysis

The chart displays four distinct performance trends:

1. **Full (Gray, 'x'):** This series represents a baseline or upper bound. It shows a constant, high accuracy of approximately **90%** across all data ratios from 2% to 50%. The line is perfectly horizontal.

2. **Top (Red, circles):** This series shows the highest performance among the variable strategies. It starts at an accuracy of approximately **86%** at a 2% ratio and demonstrates a steady, logarithmic-like increase, approaching the "Full" baseline. Key approximate data points:

    * 2%: ~86%

    * 10%: ~88.5%

    * 20%: ~89.5%

    * 50%: ~90% (nearly converging with the "Full" line)

3. **Random (Green, triangles):** This series shows moderate performance that improves with more data. It starts at approximately **77%** at a 2% ratio and rises steadily, appearing roughly linear on the chart's non-linear x-axis. Key approximate data points:

    * 2%: ~77%

    * 10%: ~79%

    * 20%: ~80%

    * 50%: ~85%

4. **Bottom (Blue, squares):** This series shows the lowest performance. It starts at approximately **76%** at a 2% ratio and increases slowly. Key approximate data points:

    * 2%: ~76%

    * 10%: ~77%

    * 20%: ~78.5%

    * 50%: ~82%
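The approximate readings listed above can be collected into a small table and ranked at each ratio. This is a sketch under the assumption that the visually estimated values are close enough for comparison; `readings` and `ranking` are hypothetical names, not part of any source data.

```python
# Approximate accuracies (%) read from the chart; estimates, not exact data.
readings = {
    "Full":   {2: 90.0, 10: 90.0, 20: 90.0, 50: 90.0},
    "Top":    {2: 86.0, 10: 88.5, 20: 89.5, 50: 90.0},
    "Random": {2: 77.0, 10: 79.0, 20: 80.0, 50: 85.0},
    "Bottom": {2: 76.0, 10: 77.0, 20: 78.5, 50: 82.0},
}

def ranking(ratio):
    """Return series names sorted from highest to lowest accuracy at `ratio`."""
    return sorted(readings, key=lambda name: readings[name][ratio], reverse=True)

for ratio in (2, 10, 20, 50):
    print(ratio, ranking(ratio))
```

At the 50% ratio the estimated values for "Full" and "Top" coincide, so their relative order there reflects only the precision of the visual reading.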

### Key Observations

* **Performance Hierarchy:** There is a consistent performance hierarchy: **Full ≥ Top > Random > Bottom**. This order holds at every listed data ratio, although at 50% the "Top" line is nearly indistinguishable from "Full".

* **Convergence:** The "Top" strategy converges toward the "Full" baseline, reaching ~89.5% at a 20% ratio and nearly matching it at 50%. The "Random" and "Bottom" strategies remain about 5 and 8 percentage points below the baseline, respectively, even at 50% data.

* **Diminishing Returns:** The "Top" curve shows strong diminishing returns: it gains ~2.5 points between the 2% and 10% ratios, but only ~1.5 points over the much wider range from 10% to 50%.

* **Relative Improvement:** From 2% to 50% ratio, the "Bottom" strategy improves by ~6 percentage points, "Random" by ~8 points, and "Top" by ~4 points (though it starts from a much higher base).
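The gains quoted in these observations follow from simple differences between the approximate readings. The sketch below recomputes them; the values are visual estimates from the chart, and the helper names `total_gain` and `segment_gains` are illustrative.

```python
# Approximate accuracies (%) read from the chart, keyed by ratio (%).
top = {2: 86.0, 10: 88.5, 20: 89.5, 50: 90.0}
random_sel = {2: 77.0, 10: 79.0, 20: 80.0, 50: 85.0}
bottom = {2: 76.0, 10: 77.0, 20: 78.5, 50: 82.0}

def total_gain(series):
    """Accuracy change from the smallest to the largest listed ratio."""
    ratios = sorted(series)
    return series[ratios[-1]] - series[ratios[0]]

def segment_gains(series):
    """Accuracy change between each pair of consecutive listed ratios."""
    ratios = sorted(series)
    return [(a, b, series[b] - series[a]) for a, b in zip(ratios, ratios[1:])]

print(total_gain(bottom), total_gain(random_sel), total_gain(top))  # 6.0 8.0 4.0
print(segment_gains(top))  # [(2, 10, 2.5), (10, 20, 1.0), (20, 50, 0.5)]
```

The shrinking per-segment gains for "Top" are what the diminishing-returns observation refers to.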

### Interpretation

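This chart suggests that **which data is selected** strongly affects how efficiently the L1-LLaMA model reaches high accuracy on MATH500. The chart itself does not state the ranking criterion behind "Top" and "Bottom", so the points below treat it as some measure of data quality or usefulness.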

* **The "Top" strategy is highly effective.** Using only the top-ranked 2% of data yields ~86% accuracy, which is about 10 points higher than using the bottom-ranked 2% (~76%) and only about 4 points below the "Full" baseline (~90%). This is consistent with the model benefiting most from high-quality, relevant examples.

* **The "Bottom" strategy is the least effective.** Selecting the lowest-ranked data gives the lowest accuracy at every ratio (~76% to ~82%), which may indicate that these examples are noisy, mislabeled, or simply less instructive for the task.

* **The "Random" strategy serves as a control.** Its performance lies between the targeted "Top" and "Bottom" selections. "Top" beats random sampling by ~9 points at a 2% ratio and ~5 points at 50%, while "Bottom" trails it by ~1 point at 2% and ~3 points at 50%.

* **Practical Implication:** For resource-constrained scenarios, a small, carefully selected subset of top-ranked data (the "Top" strategy) can achieve performance close to that of the full dataset, offering a major efficiency gain. The chart also shows that, at the same data budget, bottom-ranked data underperforms even random selection, although its accuracy still rises as more data is added.
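The point gaps cited in this interpretation can be reproduced from the approximate 2% readings. This is a rough sketch based on visually estimated values; the variable names are illustrative.

```python
# Approximate accuracies (%) at the 2% ratio, read from the chart.
full, top_2, random_2, bottom_2 = 90.0, 86.0, 77.0, 76.0

gap_top_vs_bottom = top_2 - bottom_2   # advantage of Top over Bottom
gap_full_vs_top = full - top_2         # shortfall of Top relative to Full
gap_top_vs_random = top_2 - random_2   # advantage of Top over Random

# Share of the Full baseline's accuracy reached with the top-ranked 2% of data.
share_of_full = round(100 * top_2 / full, 1)

print(gap_top_vs_bottom, gap_full_vs_top, gap_top_vs_random)  # 10.0 4.0 9.0
print(share_of_full)  # 95.6
```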