### Technical Data Extraction: Singleton Clusters Comparison

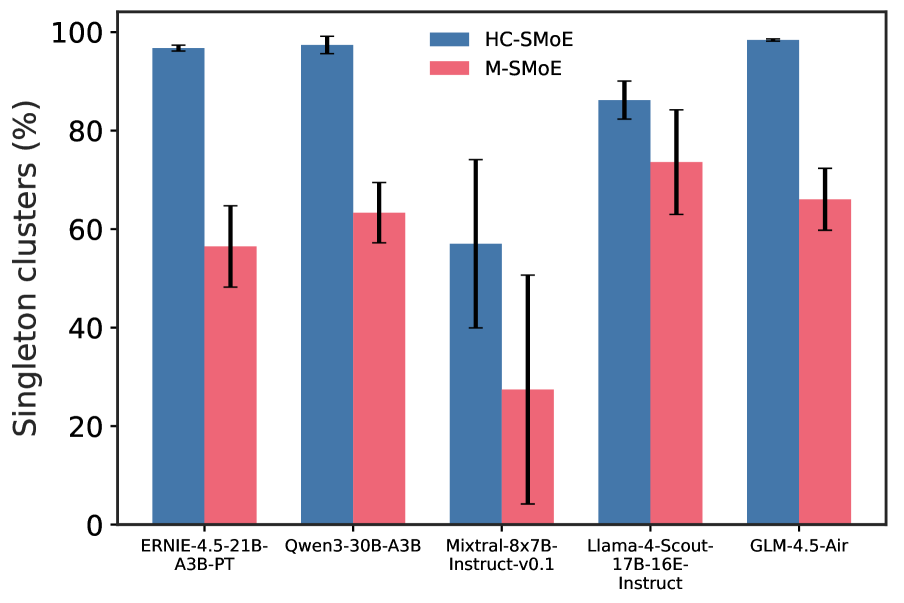

This image is a grouped bar chart comparing two methods, **HC-SMoE** and **M-SMoE**, across five Large Language Models (LLMs). The metric plotted is the percentage of singleton clusters.

#### 1. Axis and Legend Information

* **Y-Axis Title:** Singleton clusters (%)
* **Y-Axis Scale:** 0 to 100, with major markers at 0, 20, 40, 60, 80, and 100.
* **X-Axis Categories (Models):**
    1. ERNIE-4.5-21B-A3B-PT
    2. Qwen3-30B-A3B
    3. Mixtral-8x7B-Instruct-v0.1
    4. Llama-4-Scout-17B-16E-Instruct
    5. GLM-4.5-Air
* **Legend:**
    * **Blue Bar:** HC-SMoE
    * **Pink Bar:** M-SMoE
* **Error Bars:** Black vertical lines representing variability or confidence intervals are present on every bar.

#### 2. Data Table (Estimated Values)

The following table reconstructs the data points based on the visual position of the bars and error markers.

| Model | HC-SMoE (%) | M-SMoE (%) |
| :--- | :---: | :---: |
| **ERNIE-4.5-21B-A3B-PT** | ~97 | ~57 |
| **Qwen3-30B-A3B** | ~98 | ~63 |
| **Mixtral-8x7B-Instruct-v0.1** | ~57 | ~28 |
| **Llama-4-Scout-17B-16E-Instruct** | ~86 | ~74 |
| **GLM-4.5-Air** | ~99 | ~66 |

#### 3. Key Trends and Observations

* **HC-SMoE is higher throughout:** In all five models, HC-SMoE (blue) yields a higher percentage of singleton clusters than M-SMoE (pink). Based on the estimated values, the difference ranges from roughly 12 to 40 percentage points.
* **Highest values:** HC-SMoE reaches its highest values (near 100%) on **GLM-4.5-Air**, **Qwen3-30B-A3B**, and **ERNIE-4.5-21B-A3B-PT**.
* **Lowest values and largest variance:** Both methods produce their lowest values on **Mixtral-8x7B-Instruct-v0.1** (about 57% for HC-SMoE and about 28% for M-SMoE). This model also has the largest error bars, particularly for M-SMoE, which suggests greater variability for this model.
* **Smallest gap:** The gap between the two methods is smallest for **Llama-4-Scout-17B-16E-Instruct** (roughly 12 percentage points), though HC-SMoE still leads clearly.
* **Consistency:** HC-SMoE generally shows much smaller error bars (higher consistency) than M-SMoE across most models, with the exception of Mixtral.
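The gap-related observations above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses the approximate values read off the chart, not exact source data. The names `estimates` and `gaps` are illustrative and do not come from the original figure.

```python
# Approximate singleton-cluster percentages estimated from the chart,
# stored as (HC-SMoE, M-SMoE). These are visual readings, not exact data.
estimates = {
    "ERNIE-4.5-21B-A3B-PT": (97, 57),
    "Qwen3-30B-A3B": (98, 63),
    "Mixtral-8x7B-Instruct-v0.1": (57, 28),
    "Llama-4-Scout-17B-16E-Instruct": (86, 74),
    "GLM-4.5-Air": (99, 66),
}


def gaps(data):
    """Return {model: HC-SMoE minus M-SMoE} in percentage points."""
    return {model: hc - m for model, (hc, m) in data.items()}


model_gaps = gaps(estimates)

# Model with the smallest difference between the two methods.
smallest_gap_model = min(model_gaps, key=model_gaps.get)

# Models where each method reaches its lowest value.
lowest_hc_model = min(estimates, key=lambda name: estimates[name][0])
lowest_m_model = min(estimates, key=lambda name: estimates[name][1])

for model, gap in model_gaps.items():
    print(f"{model}: {gap} percentage points")
print("Smallest gap:", smallest_gap_model)
print("Lowest HC-SMoE:", lowest_hc_model)
print("Lowest M-SMoE:", lowest_m_model)
```

Because the inputs are estimates, the computed gaps are only as accurate as the visual reading. The ordering of the models by gap is more reliable than any individual number.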