## Horizontal Stacked Bar Chart: Question Success by GAIA Categories

### Overview

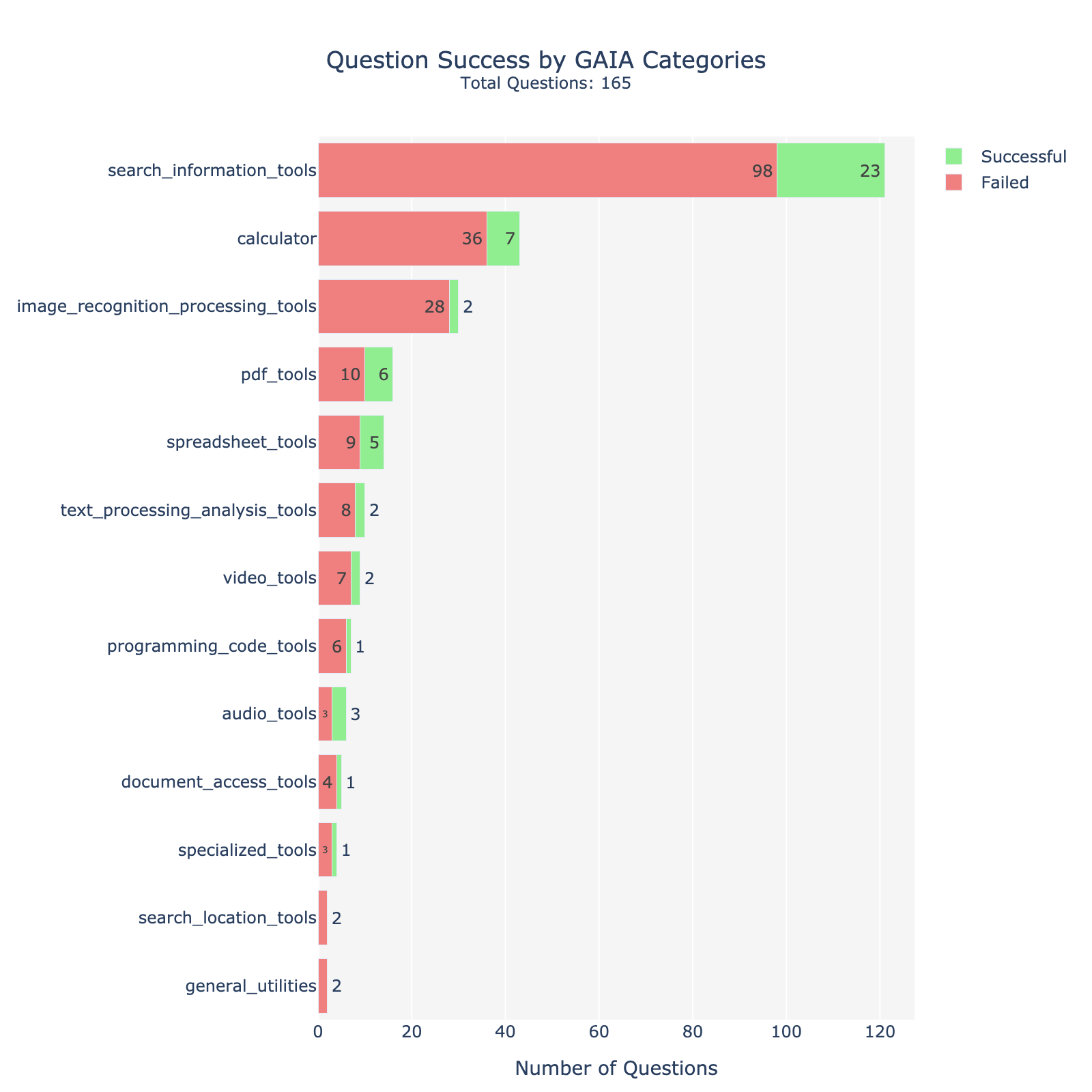

This image is a horizontal stacked bar chart titled "Question Success by GAIA Categories" with a subtitle "Total Questions: 165". It displays the performance (successful vs. failed) of an AI system across 13 distinct tool-use categories from the GAIA benchmark. The chart visually compares the volume of questions per category and the success/failure split within each.

### Components/Axes

* **Title:** "Question Success by GAIA Categories"

* **Subtitle:** "Total Questions: 165"

* **Y-Axis (Vertical):** Lists 13 categorical tool types. From top to bottom:

1. `search_information_tools`

2. `calculator`

3. `image_recognition_processing_tools`

4. `pdf_tools`

5. `spreadsheet_tools`

6. `text_processing_analysis_tools`

7. `video_tools`

8. `programming_code_tools`

9. `audio_tools`

10. `document_access_tools`

11. `specialized_tools`

12. `search_location_tools`

13. `general_utilities`

* **X-Axis (Horizontal):** Labeled "Number of Questions". The scale runs from 0 to 120, with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100, 120).

* **Legend:** Positioned in the top-right corner.

  * Green square: "Successful"

  * Red (salmon) square: "Failed"

* **Data Representation:** Each category has a horizontal bar composed of two segments:

  * **Left Segment (Red):** Represents the count of "Failed" questions.

  * **Right Segment (Green):** Represents the count of "Successful" questions.

  * The exact count for each segment is printed inside or adjacent to its respective bar segment.

### Detailed Analysis

The following table reconstructs the data presented in the chart. The "Total per Category" column is the sum of Failed and Successful for that category. Note: the category totals sum to 269 (216 failed + 53 successful), which exceeds the stated "Total Questions: 165". This indicates that a single question can be counted under multiple tool categories, while "Total Questions" refers to the number of unique questions.

| Category (Y-Axis) | Failed Count (Red Bar) | Successful Count (Green Bar) | Total per Category |
| :--- | :--- | :--- | :--- |
| search_information_tools | 98 | 23 | 121 |
| calculator | 36 | 7 | 43 |
| image_recognition_processing_tools | 28 | 2 | 30 |
| pdf_tools | 10 | 6 | 16 |
| spreadsheet_tools | 9 | 5 | 14 |
| text_processing_analysis_tools | 8 | 2 | 10 |
| video_tools | 7 | 2 | 9 |
| programming_code_tools | 6 | 1 | 7 |
| audio_tools | 3 | 3 | 6 |
| document_access_tools | 4 | 1 | 5 |
| specialized_tools | 3 | 1 | 4 |
| search_location_tools | 2 | 0 | 2 |
| general_utilities | 2 | 0 | 2 |

**Visual Trend:** The bars are ordered from longest to shortest, showing a clear hierarchy in the number of questions associated with each tool category. `search_information_tools` is by far the most prevalent category.
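The column sums and the longest-to-shortest ordering can be checked directly from the table. The snippet below is a minimal sketch: the `COUNTS` dictionary and the helper names `summarize` and `is_sorted_descending` are illustrative, not part of the chart or the GAIA tooling, and the numbers are transcribed from the table rows.

```python
# Per-category (failed, successful) counts transcribed from the table.
# The dictionary and helper names are illustrative assumptions.
COUNTS = {
    "search_information_tools": (98, 23),
    "calculator": (36, 7),
    "image_recognition_processing_tools": (28, 2),
    "pdf_tools": (10, 6),
    "spreadsheet_tools": (9, 5),
    "text_processing_analysis_tools": (8, 2),
    "video_tools": (7, 2),
    "programming_code_tools": (6, 1),
    "audio_tools": (3, 3),
    "document_access_tools": (4, 1),
    "specialized_tools": (3, 1),
    "search_location_tools": (2, 0),
    "general_utilities": (2, 0),
}


def summarize(counts):
    """Return per-category totals and the failed, successful, and grand sums."""
    totals = {name: failed + ok for name, (failed, ok) in counts.items()}
    failed_sum = sum(failed for failed, _ in counts.values())
    ok_sum = sum(ok for _, ok in counts.values())
    return totals, failed_sum, ok_sum, failed_sum + ok_sum


def is_sorted_descending(values):
    """True if each value is at least as large as the one after it."""
    values = list(values)
    return all(a >= b for a, b in zip(values, values[1:]))


totals, failed_sum, ok_sum, grand_total = summarize(COUNTS)
print(failed_sum, ok_sum, grand_total)        # 216 53 269
print(is_sorted_descending(totals.values()))  # True
```

Summing the rows gives 216 failed and 53 successful category instances, 269 in total, and the per-category totals are in non-increasing order from top to bottom.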

### Key Observations

1. **Dominant Category:** `search_information_tools` accounts for the largest volume of questions (121 total), about 45% of the 269 category instances in the table and nearly three times the next-largest category (`calculator`, 43).

2. **High Failure Rates:** The top three categories by volume (`search_information_tools`, `calculator`, `image_recognition_processing_tools`) all exhibit a high ratio of failures to successes. For `image_recognition_processing_tools`, failures outnumber successes 14:1.

3. **Balanced Performance:** `audio_tools` is the only category with an even split (3 Failed, 3 Successful).

4. **Zero Success:** Two categories, `search_location_tools` and `general_utilities`, have no recorded successful questions, though their total question count is very low (2 each).

5. **Success Rate Gradient:** There is no simple correlation between category volume and success rate. For example, `pdf_tools` (16 total) has a much higher success rate (6/16 ≈ 37.5%) than `calculator` (43 total, 7/43 ≈ 16.3%).
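The ratios and rates quoted in these observations follow from the table counts. The sketch below recomputes them; the `COUNTS` dictionary and the `success_rate` helper are illustrative names introduced here, with values transcribed from the table.

```python
from fractions import Fraction

# Per-category (failed, successful) counts transcribed from the table.
# The dictionary and helper names are illustrative assumptions.
COUNTS = {
    "search_information_tools": (98, 23),
    "calculator": (36, 7),
    "image_recognition_processing_tools": (28, 2),
    "pdf_tools": (10, 6),
    "spreadsheet_tools": (9, 5),
    "text_processing_analysis_tools": (8, 2),
    "video_tools": (7, 2),
    "programming_code_tools": (6, 1),
    "audio_tools": (3, 3),
    "document_access_tools": (4, 1),
    "specialized_tools": (3, 1),
    "search_location_tools": (2, 0),
    "general_utilities": (2, 0),
}


def success_rate(failed, ok):
    """Fraction of a category's questions that were successful."""
    return ok / (failed + ok)


rates = {name: success_rate(f, s) for name, (f, s) in COUNTS.items()}

# Categories with an even split, and categories with no successes at all.
even_split = [name for name, (f, s) in COUNTS.items() if f == s]
zero_success = [name for name, (f, s) in COUNTS.items() if s == 0]

# Failure-to-success ratio for image recognition: 28/2 reduces to 14/1.
image_ratio = Fraction(*COUNTS["image_recognition_processing_tools"])

# Print categories from highest to lowest success rate.
for name, rate in sorted(rates.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<36} {rate:6.1%}")
```

Sorting by rate rather than by volume puts `audio_tools` (50.0%) and `pdf_tools` (37.5%) at the top, which is the point of observation 5: the ranking by success rate does not follow the ranking by question count.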

### Interpretation

This chart provides a diagnostic breakdown of an AI system's capabilities on the GAIA benchmark, revealing significant performance disparities across different types of tool-use tasks.

* **Core Challenge Area:** Tasks requiring **information search and retrieval** (`search_information_tools`) account for by far the most failures (98 of 121 questions) and are also the most frequently tested. This suggests a fundamental weakness in web search, information synthesis, or tool-use orchestration for open-ended queries.

* **Specialized Tool Proficiency:** The system shows relative strength in tasks involving **PDF manipulation** and **audio processing**, achieving its highest success rates in these less common categories. This may indicate better-trained models or more deterministic tooling for these specific formats.

* **Failure Patterns:** The near-total failure on `image_recognition_processing_tools` (2 of 30 successful) and the low success rate on `calculator` (7 of 43) point to gaps in multimodal understanding and precise numerical reasoning, respectively.

* **Data Implication:** The sum of category counts (269) exceeds the number of unique questions (165). This implies that GAIA questions are **multi-faceted**, often requiring multiple tool types to solve. The system's overall performance therefore depends on its ability to chain these tools effectively, and a failure in one area (such as search) likely causes complex questions that depend on it to fail as well.
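The degree of overlap can be estimated from the table. Summing the Failed and Successful columns gives 216 + 53 category instances, so the average number of tool categories per question is:

$$
\frac{\sum_{c} \left(\text{Failed}_c + \text{Successful}_c\right)}{\text{Total Questions}} = \frac{216 + 53}{165} = \frac{269}{165} \approx 1.63
$$

Assuming every question carries at least one category tag (an assumption; the chart does not state this), 269 - 165 = 104 of the category instances are additional tags on questions already counted under another category.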

In summary, the chart doesn't just show success rates; it maps the **topography of the system's reasoning capabilities**, highlighting search, calculation, and image understanding as major valleys, while showing relative peaks in document and audio processing.