## Bar Charts: LLM Performance Comparison

### Overview

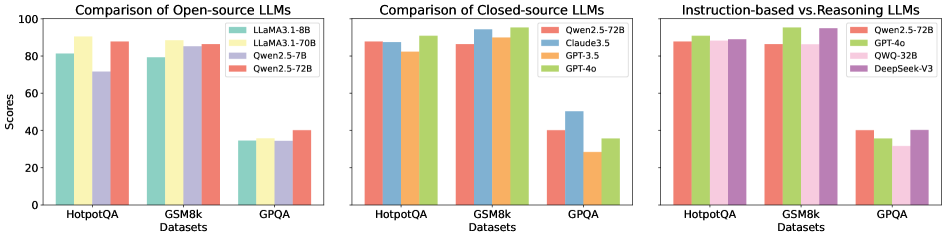

The image presents three bar charts comparing the performance of different Large Language Models (LLMs) on three datasets: HotpotQA, GSM8k, and GPQA. The charts are grouped by LLM type: Open-source, Closed-source, and Instruction-based vs. Reasoning. The y-axis represents scores, ranging from 0 to 100.

### Components/Axes

**General Chart Elements:**

* **Title (Left Chart):** Comparison of Open-source LLMs

* **Title (Middle Chart):** Comparison of Closed-source LLMs

* **Title (Right Chart):** Instruction-based vs. Reasoning LLMs

* **Y-axis Label:** Scores

* **Y-axis Scale:** 0, 20, 40, 60, 80, 100

* **X-axis Label:** Datasets

* **X-axis Categories:** HotpotQA, GSM8k, GPQA

**Left Chart (Open-source LLMs) Legend:**

* **Light Green:** LLaMA3.1-8B

* **Yellow:** LLaMA3.1-70B

* **Lavender:** Qwen2.5-7B

* **Salmon:** Qwen2.5-72B

**Middle Chart (Closed-source LLMs) Legend:**

* **Salmon:** Qwen2.5-72B

* **Orange:** Claude3.5

* **Teal:** GPT-3.5

* **Green:** GPT-4o

**Right Chart (Instruction-based vs. Reasoning LLMs) Legend:**

* **Salmon:** Qwen2.5-72B

* **Green:** GPT-4o

* **Pink:** QWQ-32B

* **Purple:** DeepSeek-V3

### Detailed Analysis

**Left Chart (Open-source LLMs):**

* **LLaMA3.1-8B (Light Green):**

* HotpotQA: ~82

* GSM8k: ~79

* GPQA: ~34

* **LLaMA3.1-70B (Yellow):**

* HotpotQA: ~91

* GSM8k: ~88

* GPQA: ~35

* **Qwen2.5-7B (Lavender):**

* HotpotQA: ~72

* GSM8k: ~85

* GPQA: ~34

* **Qwen2.5-72B (Salmon):**

* HotpotQA: ~88

* GSM8k: ~86

* GPQA: ~40

**Middle Chart (Closed-source LLMs):**

* **Qwen2.5-72B (Salmon):**

* HotpotQA: ~87

* GSM8k: ~86

* GPQA: ~40

* **Claude3.5 (Orange):**

* HotpotQA: ~89

* GSM8k: ~95

* GPQA: ~28

* **GPT-3.5 (Teal):**

* HotpotQA: ~87

* GSM8k: ~91

* GPQA: ~49

* **GPT-4o (Green):**

* HotpotQA: ~92

* GSM8k: ~97

* GPQA: ~36

**Right Chart (Instruction-based vs. Reasoning LLMs):**

* **Qwen2.5-72B (Salmon):**

* HotpotQA: ~88

* GSM8k: ~96

* GPQA: ~30

* **GPT-4o (Green):**

* HotpotQA: ~92

* GSM8k: ~98

* GPQA: ~32

* **QWQ-32B (Pink):**

* HotpotQA: ~89

* GSM8k: ~93

* GPQA: ~28

* **DeepSeek-V3 (Purple):**

* HotpotQA: ~89

* GSM8k: ~95

* GPQA: ~31

### Key Observations

* Across all charts, performance on GPQA is significantly lower than on HotpotQA and GSM8k.

* In the Open-source LLMs chart, LLaMA3.1-70B (Yellow) generally performs better than LLaMA3.1-8B (Light Green).

* In the Closed-source LLMs chart, GPT-4o (Green) and Claude3.5 (Orange) show high performance on HotpotQA and GSM8k.

* In the Instruction-based vs. Reasoning LLMs chart, GPT-4o (Green) consistently scores high on all datasets.

### Interpretation

The charts provide a comparative analysis of LLM performance across different model types and datasets. The lower scores on GPQA suggest that all models struggle with this particular dataset, possibly indicating a higher level of complexity or a different type of reasoning required. The Open-source LLM comparison shows the impact of model size (70B vs. 8B parameters) on performance. The Closed-source and Instruction-based/Reasoning charts highlight the strengths of models like GPT-4o and Claude3.5 in specific tasks. The data suggests that model architecture and training data play a significant role in determining LLM performance on different benchmarks.