## Diagram: Hybrid Neural Network Architecture with Latent Bernoulli Model (LBM)

### Overview

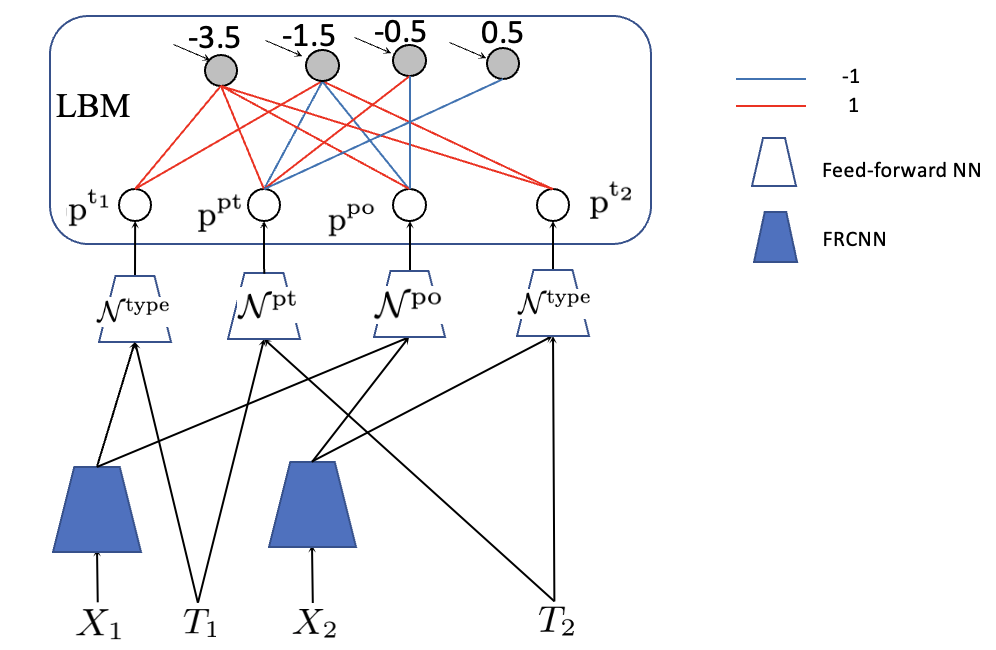

The image shows an architecture diagram of a hybrid machine learning model that couples a **Latent Bernoulli Model (LBM)** with several neural network components: Feed-forward Neural Networks (NN) and what appear to be Feature Representation CNNs (FRCNN). Data flows upward, from the input variables at the bottom, through the processing layers, to the LBM's latent variables at the top. Inside the LBM, colored lines with weights of +1 or -1 link the latent variables to the probability nodes.

### Components/Axes

The diagram is organized into distinct layers and components:

1. **Top Layer (LBM Box):**
   * A large rounded rectangle labeled **"LBM"** (likely a Latent Bernoulli Model).
   * Four **gray circles** (latent variables) with associated numerical values: **-3.5, -1.5, -0.5, 0.5**.
   * Four **white circles** (probability nodes) labeled **p^{t1}, p^{pt}, p^{po}, p^{t2}**.
   * **Connections:** Colored lines link the gray circles to the white circles. The legend defines:
     * **Blue line:** weight of **-1**
     * **Red line:** weight of **1**

2. **Middle Layer (Neural Network Modules):**
   * Four **white trapezoids**, identified in the legend as **Feed-forward NN**.
   * Their labels, in order, are **N_type, N^{pt}, N^{po}, N_type**.
   * Each Feed-forward NN sits directly below, and connects to, one of the white probability circles (p^{t1}, p^{pt}, p^{po}, p^{t2}).

3. **Bottom Layer (Input & FRCNN Modules):**
   * Two **blue trapezoids**, identified in the legend as **FRCNN**.
   * **Input Variables:** four labels at the very bottom: **X1, T1, X2, T2**.
   * **Connections:**
     * The left FRCNN receives input from **X1** and **T1**.
     * The right FRCNN receives input from **X2** and **T2**.
     * A network of black lines carries the outputs of both FRCNNs, together with the raw inputs T1 and T2, to the Feed-forward NNs in the middle layer.
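The wiring listed above can be traced with a minimal, runnable sketch of the bottom-to-top data flow. Everything concrete here is hypothetical: `frcnn_stub`, `FeedForward`, `forward`, the feature sizes `FEAT_DIM`/`TYPE_DIM`, and the random weights are placeholders, not the authors' modules. The sketch assumes N^{pt} and N^{po} see both FRCNN outputs plus both raw T inputs, and it models the repeated "N_type" label as one shared network object.

```python
import math
import random
from typing import Dict, List

FEAT_DIM = 3  # hypothetical length of X1 / X2
TYPE_DIM = 2  # hypothetical length of T1 / T2 (e.g. a one-hot type code)
FRCNN_OUT = FEAT_DIM + TYPE_DIM  # the stub below emits one feature per input value


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def frcnn_stub(x: List[float], t: List[float]) -> List[float]:
    """Hypothetical stand-in for an FRCNN branch that receives both X and T.

    A real branch would be a convolutional network; this one only squashes
    each input value with tanh so the data flow can be traced end to end.
    """
    return [math.tanh(v) for v in x + t]


class FeedForward:
    """Hypothetical one-layer feed-forward net mapping features to a probability."""

    def __init__(self, in_dim: int, seed: int) -> None:
        rng = random.Random(seed)
        self.w = [rng.uniform(-1.0, 1.0) for _ in range(in_dim)]
        self.b = 0.0

    def __call__(self, features: List[float]) -> float:
        if len(features) != len(self.w):
            raise ValueError("input width does not match the layer")
        return sigmoid(self.b + sum(w * f for w, f in zip(self.w, features)))


# One N_type object serves both streams, modelling the possible weight sharing.
n_type = FeedForward(FRCNN_OUT + TYPE_DIM, seed=0)
# Assumption: N^{pt} and N^{po} see both FRCNN outputs plus both raw T inputs.
n_pt = FeedForward(2 * (FRCNN_OUT + TYPE_DIM), seed=1)
n_po = FeedForward(2 * (FRCNN_OUT + TYPE_DIM), seed=2)


def forward(x1: List[float], t1: List[float],
            x2: List[float], t2: List[float]) -> Dict[str, float]:
    """Bottom-to-top pass: FRCNNs -> Feed-forward NNs -> probability nodes."""
    f1 = frcnn_stub(x1, t1)  # left FRCNN
    f2 = frcnn_stub(x2, t2)  # right FRCNN
    both = f1 + t1 + f2 + t2  # fused input for the pair-level networks
    return {
        "t1": n_type(f1 + t1),
        "pt": n_pt(both),
        "po": n_po(both),
        "t2": n_type(f2 + t2),
    }


p = forward([0.2, -0.1, 0.4], [1.0, 0.0], [0.3, 0.5, -0.2], [0.0, 1.0])
print({k: round(v, 3) for k, v in p.items()})
```

Because `n_type` is a single object, identical (X, T) pairs on the left and right produce identical p^{t1} and p^{t2}, which is the practical consequence of sharing weights.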

### Detailed Analysis

**Component Isolation & Flow:**

* **Region 1 - LBM (Top):** This is a probabilistic graphical model. The four gray nodes are latent variables with fixed bias values (-3.5 to 0.5). They connect to the probability nodes (p^{t1}, etc.) through signed unit weights (+1 or -1). For example, the leftmost gray node (-3.5) has a red (+1) connection to p^{t1} and a blue (-1) connection to p^{pt}.

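* **Region 2 - Neural Network Processing (Middle):** Each dedicated Feed-forward Neural Network (N_type, N^{pt}, N^{po}, and the second N_type) sits directly below one probability node. Given the bottom-to-top data flow, these networks most plausibly compute the probability values p^{t1}, p^{pt}, p^{po}, p^{t2}, which then enter the LBM as its observed inputs.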

* **Region 3 - Input Processing (Bottom):** Raw input data (X1, X2, likely feature vectors) and possibly text or type data (T1, T2) are first processed by FRCNN modules. The outputs of these FRCNNs, along with the raw T1/T2 data, are then fed into the Feed-forward NNs. The crisscrossing lines indicate that each Feed-forward NN receives combined information from multiple sources (e.g., N^{pt} receives input from the left FRCNN, the right FRCNN, and T1).
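The Region 1 mechanics (biases plus ±1 weights) can be made concrete with a minimal sketch. It assumes the LBM behaves like a restricted Boltzmann machine in which each gray unit is a logistic hidden unit and the white-node probabilities are plugged in as mean-field visible values. The bias values come from the figure; the weight matrix `W` is hypothetical apart from the two entries the text describes for the leftmost node, and `hidden_probs` is an illustrative name.

```python
import math
from typing import List

# Biases read off the four gray LBM units, left to right.
BIASES = [-3.5, -1.5, -0.5, 0.5]

# HYPOTHETICAL weights: rows = gray units, columns = white nodes in the order
# [p_t1, p_pt, p_po, p_t2]; +1 = red line, -1 = blue line, 0 = no line.
# Only row 0, columns 0-1 follow the example in the text; every other entry
# is a placeholder, not a reading of the figure.
W = [
    [1, -1, 1, 1],
    [-1, 1, 1, 0],
    [0, -1, 1, -1],
    [1, 0, -1, 1],
]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def hidden_probs(p: List[float]) -> List[float]:
    """P(h_j = 1 | v) = sigmoid(b_j + sum_i W[j][i] * v_i) for each gray unit.

    Using the probabilities p directly as visible values is a mean-field
    assumption about how the LBM consumes them.
    """
    return [sigmoid(b + sum(w * v for w, v in zip(row, p)))
            for b, row in zip(BIASES, W)]


print([round(h, 3) for h in hidden_probs([0.9, 0.2, 0.7, 0.8])])
```

With all inputs at zero, each unit's activation is just `sigmoid(bias)`, so the -3.5 unit starts almost off and the 0.5 unit starts slightly on.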

**Legend Cross-Reference:**

* The legend is positioned in the **top-right** of the image.

* It accurately defines the two line colors (blue=-1, red=1) used in the LBM box.

* It correctly identifies the white trapezoid as "Feed-forward NN" and the blue trapezoid as "FRCNN". All trapezoids in the diagram match these definitions.

### Key Observations

1. **Hybrid Architecture:** The model explicitly combines a structured probabilistic model (LBM) with deep learning components (FRCNN, Feed-forward NN), suggesting a neuro-symbolic or structured prediction approach.

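2. **Graded Latent Biases:** The latent biases progress from strongly negative (-3.5) to slightly positive (0.5). Assuming each latent unit adds its ±1-weighted inputs to its bias, this gives every unit a different activation threshold: the -3.5 unit switches on only when several connected inputs agree, whereas the 0.5 unit is on by default unless a -1 connection pulls it down.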

3. **Complex Connectivity:** The Feed-forward NNs (especially N^{pt} and N^{po}) act as fusion points, integrating processed features from both FRCNN streams and direct inputs (T1, T2). This suggests these modules are responsible for combining multimodal or multi-source information.

4. **Parameter Sharing:** The label "N_type" appears twice, suggesting that the same Feed-forward NN architecture (possibly with shared weights) is applied to both the T1 and the T2 stream to handle their "type" information.
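Observation 2 can be checked with a few lines of arithmetic. This sketch assumes a unit's pre-activation is its bias plus the number of fully active inputs on +1 (red) edges, with no -1 (blue) edge active; `min_active_inputs` is an illustrative helper, not part of the original model.

```python
import math

# Biases read off the four gray LBM units, left to right.
BIASES = [-3.5, -1.5, -0.5, 0.5]


def min_active_inputs(bias: float) -> int:
    """Smallest k >= 0 with bias + k > 0.

    Assumes k inputs are fully on along +1 edges and no -1 edge is active.
    """
    # bias + k > 0  <=>  k > -bias, so the smallest integer k is floor(-bias) + 1.
    return max(0, math.floor(-bias) + 1)


for b in BIASES:
    print(f"bias {b:+.1f}: needs {min_active_inputs(b)} active +1 input(s)")

# With half-integer biases and integer weight sums, bias + k is never exactly 0,
# so a binary input pattern never lands exactly on a unit's decision boundary.
```

Under these assumptions the four units need 4, 2, 1, and 0 active +1 inputs respectively, which quantifies how strongly each bias shapes its unit's default behavior.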

### Interpretation

This diagram represents a model designed for tasks requiring both perception (via the FRCNNs) and structured reasoning (via the LBM). The LBM likely models high-level, discrete latent factors or decisions (e.g., object presence, relationship types) that are tied to the intermediate probabilities through +1/-1 connections. Those probabilities are most plausibly computed by the Feed-forward networks, which fuse the features extracted by the FRCNNs from the raw data (X1, X2) with the inputs T1 and T2; the LBM then combines them into a final, jointly consistent prediction.

The architecture implies a **top-down and bottom-up information flow**: the LBM provides top-down constraints or priors, while the FRCNNs provide bottom-up, data-driven features. One plausible application is visual relationship detection, where the LBM would model the compatibility of object pairs (p^{pt} may stand for "pair type") and the FRCNNs would extract features from the object images (X1, X2). In that reading, the numerical biases in the LBM would encode the prior likelihood of different relationship types. The overall goal is likely joint inference over both perceptual data and abstract relational concepts.
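One way such joint inference could work is sketched below, under loudly stated assumptions: the LBM is treated as a bipartite Boltzmann machine with no visible biases, so summing out the gray units gives P_LBM(v) ∝ exp(-F(v)); the four network outputs are treated as independent Bernoulli probabilities; and the two models are multiplied (a product-of-experts scheme swapped in for illustration, not the authors' stated method). The weight matrix `W` is hypothetical, and `free_energy`, `nn_nll`, and `joint_map` are illustrative names.

```python
import itertools
import math
from typing import List, Tuple

BIASES = [-3.5, -1.5, -0.5, 0.5]  # read off the figure
# HYPOTHETICAL weights (rows = gray units, columns = [t1, pt, po, t2]); placeholders only.
W = [
    [1, -1, 1, 1],
    [-1, 1, 1, 0],
    [0, -1, 1, -1],
    [1, 0, -1, 1],
]


def softplus(z: float) -> float:
    return math.log1p(math.exp(z))


def free_energy(v: Tuple[int, ...]) -> float:
    """RBM-style free energy F(v) = -sum_j softplus(b_j + W_j . v).

    Summing out the binary gray units gives P_LBM(v) proportional to exp(-F(v)),
    assuming no visible biases.
    """
    return -sum(softplus(b + sum(w * x for w, x in zip(row, v)))
                for b, row in zip(BIASES, W))


def nn_nll(v: Tuple[int, ...], p: List[float]) -> float:
    """-log P_NN(v), treating the four network outputs as independent Bernoullis."""
    return -sum(math.log(pi) if vi else math.log(1.0 - pi) for vi, pi in zip(v, p))


def joint_map(p: List[float]) -> Tuple[int, ...]:
    """Brute-force all 2^4 binary assignments, minimising F(v) + NLL(v)."""
    candidates = itertools.product([0, 1], repeat=len(p))
    return min(candidates, key=lambda v: free_energy(v) + nn_nll(v, p))


print(joint_map([0.9, 0.3, 0.6, 0.55]))
```

Brute force is feasible here only because there are four probability nodes; when the networks are confident their vote dominates, while uncertain outputs let the LBM's preferences tip the decision.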