## Heatmap: Avg JS Divergence vs. Layer

### Overview

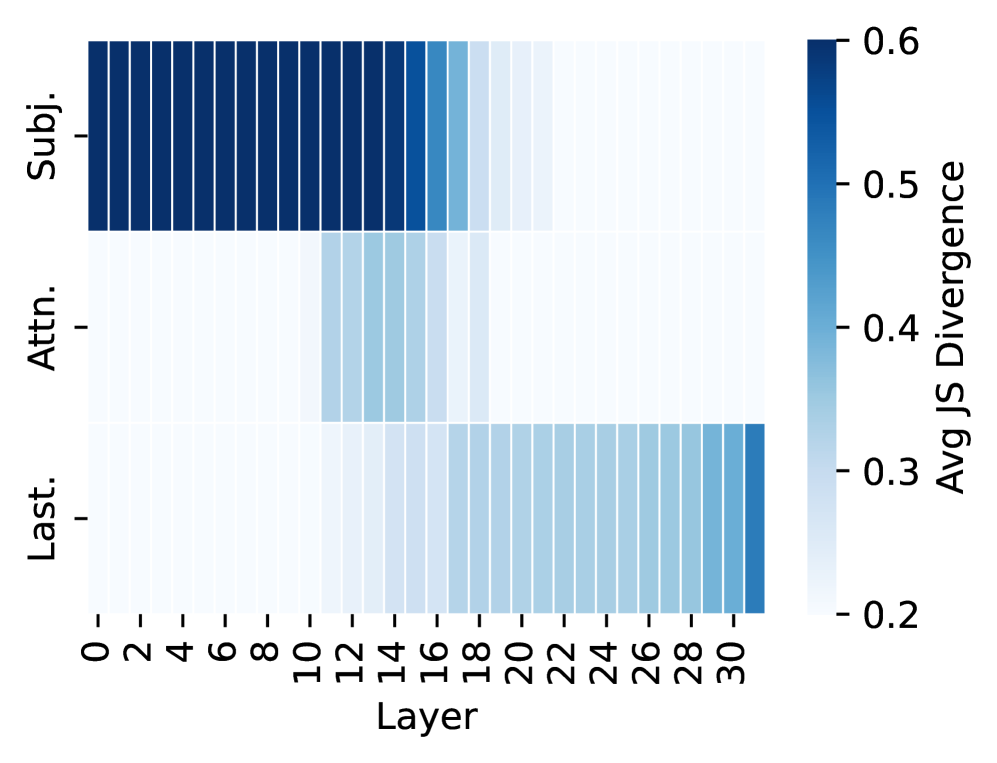

The image is a heatmap of the average Jensen-Shannon (JS) divergence at each layer (0-30) for three categories: "Subj.", "Attn.", and "Last." Color intensity encodes the magnitude of the divergence: darker blue indicates higher values and lighter blue indicates lower values.

### Components/Axes

* **Y-axis:** Categorical labels: "Subj.", "Attn.", "Last."

* **X-axis:** Layer number, ranging from 0 to 30 in increments of 2.

* **Colorbar (Right):** "Avg JS Divergence" ranging from 0.2 to 0.6. Dark blue corresponds to 0.6, and light blue corresponds to 0.2.
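
For reference, the quantity on the colorbar can be computed along the following lines. This is a minimal sketch, assuming each comparison is between two discrete probability distributions. The function names `kl_divergence`, `js_divergence`, and `mean_js_divergence`, the use of the natural logarithm, and the idea that "Avg" means a plain mean over pairs are all assumptions, not details stated in the figure.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; terms with p_i == 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def js_divergence(p, q):
    """Jensen-Shannon divergence (natural log) between two discrete distributions."""
    # Normalize so that each input sums to 1.
    total_p, total_q = sum(p), sum(q)
    p = [x / total_p for x in p]
    q = [x / total_q for x in q]
    # Mixture distribution m = (p + q) / 2.
    m = [0.5 * (pi + qi) for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)

def mean_js_divergence(pairs):
    """Hypothetical helper: plain mean of the JS divergence over (p, q) pairs."""
    values = [js_divergence(p, q) for p, q in pairs]
    return sum(values) / len(values)
```

With the natural logarithm, the JS divergence lies between 0 and ln 2 (about 0.693); with base-2 logarithms the upper bound is 1. The figure does not state which base was used, and the 0.2 to 0.6 colorbar range is compatible with either.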

### Detailed Analysis

* **Subj.:** The "Subj." row is dark blue from layer 0 to approximately layer 16, with a JS divergence of approximately 0.55-0.6. For layers 18-30 it lightens slightly, to approximately 0.45-0.5.

* **Attn.:** The "Attn." row shows low JS divergence (light blue) from layer 0 to approximately layer 10. Between layers 12 and 18 the divergence is elevated (darker blue), peaking around layers 14-16 at approximately 0.35-0.4. After layer 18, it falls back to approximately 0.2.

* **Last.:** The "Last." category has low JS divergence (light blue) from layer 0 to layer 18, with a JS divergence of approximately 0.2. From layer 20 to layer 30, the divergence increases (darker blue), reaching a JS divergence of approximately 0.3-0.35.

### Key Observations

* "Subj." has consistently high JS divergence, strongest from layer 0 to approximately layer 16.

* "Attn." shows a peak in JS divergence around layers 14-16.

* "Last." shows an increase in JS divergence in the later layers (20-30).
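
The observations above can be cross-checked against the approximate readings in the Detailed Analysis. The sketch below is illustrative only: the `readings` dictionary and the `peak_band` helper are hypothetical names, the numbers are visual estimates transcribed from the description rather than exact data, and the 0.2 value for "Attn." layers 0-10 is assumed from the light-blue end of the colorbar. Transitional layers without a stated value are omitted.

```python
# Approximate (low, high) JS divergence per layer band, as estimated
# from the heatmap colors. These are not exact values from the figure.
readings = {
    "Subj.": {(0, 16): (0.55, 0.60), (18, 30): (0.45, 0.50)},
    "Attn.": {(0, 10): (0.20, 0.20), (14, 16): (0.35, 0.40), (20, 30): (0.20, 0.20)},
    "Last.": {(0, 18): (0.20, 0.20), (20, 30): (0.30, 0.35)},
}

def peak_band(bands):
    """Return the (start, end) layer band whose midpoint reading is largest."""
    return max(bands, key=lambda band: sum(bands[band]) / 2)

# Map each category to the layer band with the highest estimated divergence.
peaks = {name: peak_band(bands) for name, bands in readings.items()}
```

Under these estimates, `peaks` maps "Subj." to layers 0-16, "Attn." to layers 14-16, and "Last." to layers 20-30, matching the three observations.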

### Interpretation

The heatmap suggests that the "Subj." category diverges most in the early-to-middle layers (0-16), which may indicate that these layers matter most for processing subject-related information. The "Attn." category peaks in the middle layers (around 14-16), suggesting that these layers are where attention-related effects are strongest. The "Last." category rises in the later layers (20-30), consistent with a role in final processing or output generation. Taken together, the differences in JS divergence across layers and categories point to different layers playing different roles in how the model processes each type of information.