## Bar Chart: Communication Rounds for Federated Learning Methods

### Overview

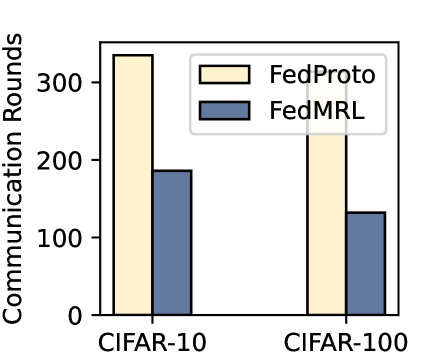

The image is a vertical grouped bar chart comparing the number of communication rounds required by two federated learning methods, **FedProto** and **FedMRL**, on two datasets: **CIFAR-10** and **CIFAR-100**. Fewer rounds correspond to shorter bars, so the chart reads as a comparison of communication efficiency.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis:**

    * **Label:** "Communication Rounds"

    * **Scale:** Linear, with labeled major tick marks at 0, 100, 200, and 300. The tallest bar extends above the 300 tick.

* **X-Axis:**

    * **Categories:** Two primary categories representing datasets: "CIFAR-10" (left group) and "CIFAR-100" (right group).

* **Legend:**

    * **Position:** Top-right corner of the chart area.

    * **Items:**

        1. **FedProto:** Represented by a light yellow/cream-colored bar.

        2. **FedMRL:** Represented by a medium blue bar.

* **Data Series:** Two bars per dataset category, corresponding to the two methods in the legend.

### Detailed Analysis

**Data Points and Trends:**

1. **CIFAR-10 Dataset (Left Group):**

    * **FedProto (Light Yellow Bar):** This is the tallest bar in the chart. Its top aligns slightly above the 300 mark on the y-axis. **Approximate Value: ~330 communication rounds.**

    * **FedMRL (Blue Bar):** This bar is significantly shorter than its FedProto counterpart. Its top aligns just below the 200 mark. **Approximate Value: ~190 communication rounds.**

    * **Trend:** For the CIFAR-10 dataset, FedProto requires substantially more communication rounds than FedMRL.

2. **CIFAR-100 Dataset (Right Group):**

    * **FedProto (Light Yellow Bar):** This bar is shorter than the FedProto bar for CIFAR-10. Its top aligns between the 200 and 300 marks, closer to 200. **Approximate Value: ~230 communication rounds.**

    * **FedMRL (Blue Bar):** This is the shortest bar in the chart. Its top aligns just above the 100 mark. **Approximate Value: ~130 communication rounds.**

    * **Trend:** For the CIFAR-100 dataset, FedProto again requires more communication rounds than FedMRL. The absolute number of rounds for both methods is lower than for CIFAR-10.

**Cross-Reference Verification:**

* The legend correctly maps the light yellow color to "FedProto" and the blue color to "FedMRL."

* In both dataset groups, the light yellow (FedProto) bar is positioned to the left of the blue (FedMRL) bar.

* The visual trend (FedProto bars are always taller than FedMRL bars) is consistent with the extracted numerical approximations.
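
The cross-checks above can also be expressed mechanically. The following is a minimal sketch, assuming the approximate values read off the chart; the names `estimates`, `described_ranges`, and `check_estimates` are hypothetical and introduced only for illustration. It confirms that each estimate falls in the tick interval the description places it in, and that FedProto exceeds FedMRL within each dataset.

```python
# Approximate values read off the chart (visual estimates, not exact data).
estimates = {
    ("CIFAR-10", "FedProto"): 330,
    ("CIFAR-10", "FedMRL"): 190,
    ("CIFAR-100", "FedProto"): 230,
    ("CIFAR-100", "FedMRL"): 130,
}

# Tick interval (lower, upper) that each bar top is described as falling in.
# An upper bound of None means "above the highest labeled tick".
described_ranges = {
    ("CIFAR-10", "FedProto"): (300, None),
    ("CIFAR-10", "FedMRL"): (100, 200),
    ("CIFAR-100", "FedProto"): (200, 300),
    ("CIFAR-100", "FedMRL"): (100, 200),
}


def check_estimates(estimates, described_ranges):
    """Return a list of human-readable inconsistencies (empty if none)."""
    problems = []
    # Each estimate must lie strictly inside its described tick interval.
    for key, value in estimates.items():
        low, high = described_ranges[key]
        if value <= low or (high is not None and value >= high):
            problems.append(f"{key}: {value} outside described range")
    # Within each dataset, the FedProto bar must be taller than the FedMRL bar.
    for dataset in sorted({d for d, _ in estimates}):
        if estimates[(dataset, "FedProto")] <= estimates[(dataset, "FedMRL")]:
            problems.append(f"{dataset}: FedProto is not taller than FedMRL")
    return problems


problems = check_estimates(estimates, described_ranges)
print(problems)  # an empty list means the estimates match the descriptions
```

An empty result only shows that the estimates are internally consistent with the verbal description; it says nothing about how close they are to the underlying data.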

### Key Observations

1. **Consistent Performance Gap:** FedMRL consistently requires fewer communication rounds than FedProto across both datasets.

2. **Dataset Complexity Impact:** Both methods require fewer communication rounds on CIFAR-100 than on CIFAR-10. This is a notable pattern, as CIFAR-100 is generally considered the more complex classification task (100 classes vs. 10 classes).

3. **Magnitude of Difference:** The absolute gap between the two methods is larger on CIFAR-10 (~140 rounds) than on CIFAR-100 (~100 rounds). Relative to FedProto, however, the reduction is nearly identical: roughly 42% on CIFAR-10 and 43% on CIFAR-100.
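
The gap figures in the observations above follow from simple arithmetic on the approximate bar heights. A minimal sketch, assuming those visual estimates (they are not exact data), with `rounds` and `gap_summary` as hypothetical names chosen for illustration:

```python
# Approximate values read off the chart (visual estimates, not exact data).
rounds = {
    "CIFAR-10": {"FedProto": 330, "FedMRL": 190},
    "CIFAR-100": {"FedProto": 230, "FedMRL": 130},
}


def gap_summary(rounds):
    """Return FedMRL's absolute and relative round savings over FedProto."""
    summary = {}
    for dataset, by_method in rounds.items():
        baseline = by_method["FedProto"]
        absolute = baseline - by_method["FedMRL"]
        summary[dataset] = {
            "absolute": absolute,             # rounds saved
            "relative": absolute / baseline,  # fraction of FedProto's rounds
        }
    return summary


summary = gap_summary(rounds)
for dataset, stats in summary.items():
    print(f"{dataset}: {stats['absolute']} fewer rounds "
          f"({stats['relative']:.1%} reduction)")
# CIFAR-10: 140 fewer rounds (42.4% reduction)
# CIFAR-100: 100 fewer rounds (43.5% reduction)
```

Because the inputs are read from bar heights, a shift of 10 rounds in any estimate moves the relative figures by a few percentage points, so the percentages are best treated as indicative.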

### Interpretation

The data suggests that the **FedMRL** method is more communication-efficient than **FedProto** in the context of federated learning on image classification tasks. Communication rounds are a critical resource in federated learning, as each round involves transmitting model updates between clients and a server, consuming bandwidth and time. Therefore, a method requiring fewer rounds to achieve its goal (presumably convergence or a target accuracy) is advantageous.

The fact that both methods show lower round counts on the more complex CIFAR-100 dataset is intriguing and warrants further investigation. It could indicate differences in the experimental setup, convergence criteria, or inherent properties of the methods when dealing with a larger number of classes. The chart effectively communicates that FedMRL offers a potential reduction in communication overhead, which is a key practical consideration for deploying federated learning systems.