## Heatmap: Attention Visualization of a Transformer Model

### Overview

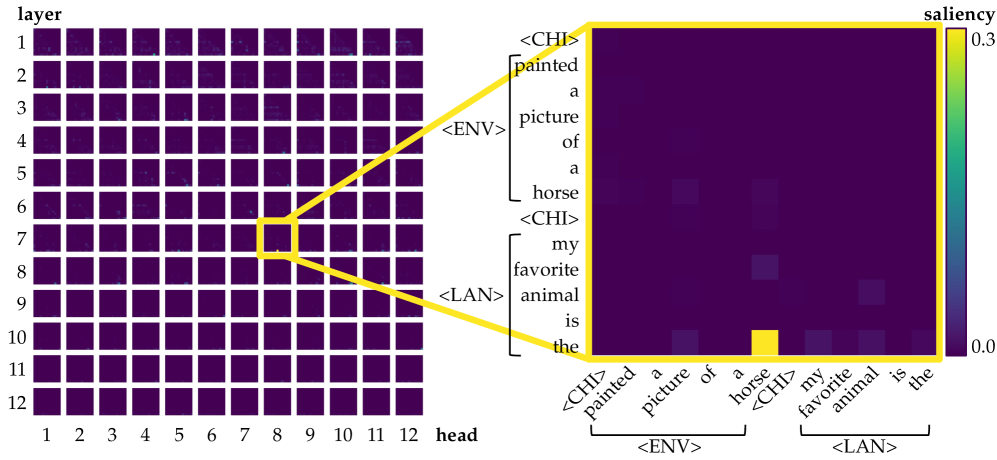

The image presents a visualization of attention weights in a transformer model. It consists of two main parts: a grid of heatmaps representing attention patterns across different layers and heads, and a detailed heatmap showing the attention weights for a specific layer and head with respect to an input sentence.

### Components/Axes

* **Left Grid:**

* X-axis: "head", labeled from 1 to 12.

* Y-axis: "layer", labeled from 1 to 12.

* Each cell in the grid is a heatmap representing the attention pattern of a specific layer and head.

* **Right Heatmap:**

* X-axis: Input sentence: `<CHI> painted a picture of a horse <CHI> my favorite animal is the`. The sentence is grouped into spans labeled `<ENV>` and `<LAN>`.

* Y-axis: Input sentence: `<CHI> painted a picture of a horse <CHI> my favorite animal is the`. The sentence is grouped into spans labeled `<ENV>` and `<LAN>`.

* **Colorbar (Saliency):**

* Located on the right side of the right heatmap.

* Ranges from 0.0 (dark purple) to 0.3 (yellow).

* Indicates the attention weight or "saliency" of each cell in the heatmap.

### Detailed Analysis

* **Left Grid:**

* Each small heatmap in the grid shows the attention distribution for a specific layer (1-12) and head (1-12).

* The intensity of the color (ranging from dark purple to yellow) indicates the strength of attention.

* The heatmap at layer 8, head 8 is highlighted with a yellow border, indicating it is the focus of the detailed heatmap on the right.

* **Right Heatmap:**

* The right heatmap visualizes the attention weights between words in the input sentence for layer 8, head 8.

* The sentence is: `<CHI> painted a picture of a horse <CHI> my favorite animal is the`.

* The heatmap shows which words the model is attending to when processing each word in the sentence.

* For example, the token `is` has a high attention weight (yellow) on a `<CHI>` token.

* Most of the heatmap is dark purple, indicating attention weights near 0.0.

* A few lighter purple-to-yellow cells mark higher weights, up to about 0.3, between specific pairs of tokens.
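Reading a single head out of the grid and finding which token a given token attends to most can be sketched as follows. This is a minimal illustration, not the code behind the figure. The nested-list layout `attn[layer][head][query][key]`, the helpers `select_head` and `strongest_target`, the whitespace tokenization, and the convention that rows are the attending tokens are all assumptions. The weights are placeholders, and because the figure description does not say which of the two `<CHI>` tokens receives the attention, index 0 is chosen arbitrarily.

```python
# Tokens read off the axes of the detailed heatmap (whitespace split assumed).
TOKENS = "<CHI> painted a picture of a horse <CHI> my favorite animal is the".split()

NUM_LAYERS = 12
NUM_HEADS = 12


def uniform_attention(n):
    """Placeholder weights: every row spreads attention evenly (NOT figure data)."""
    return [[1.0 / n] * n for _ in range(n)]


def select_head(attn, layer, head):
    """Return the matrix for a 1-based (layer, head) label, as used in the grid."""
    if not (1 <= layer <= len(attn)) or not (1 <= head <= len(attn[0])):
        raise IndexError("layer/head label out of range")
    return attn[layer - 1][head - 1]


def strongest_target(matrix, tokens, query_index):
    """Return (token, weight) for the largest weight in one row of the matrix."""
    row = matrix[query_index]
    best = max(range(len(row)), key=row.__getitem__)
    return tokens[best], row[best]


# Hypothetical container: attn[layer][head][query][key], filled with placeholders.
n = len(TOKENS)
attn = [[uniform_attention(n) for _ in range(NUM_HEADS)] for _ in range(NUM_LAYERS)]

# Overwrite one row of layer 8, head 8 with made-up values for demonstration:
# the row for "is" puts weight 0.3 on token 0 and shares the rest evenly.
is_index = TOKENS.index("is")
demo_row = [0.7 / (n - 1)] * n
demo_row[0] = 0.3
select_head(attn, 8, 8)[is_index] = demo_row

token, weight = strongest_target(select_head(attn, 8, 8), TOKENS, is_index)
print(f"'is' attends most strongly to {token!r} (weight {weight:.2f})")
```

The 1-based labels in the grid are converted to 0-based indices in one place, `select_head`, so the rest of the code can use the same layer and head numbers that appear in the figure.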

### Key Observations

* The attention patterns vary across different layers and heads, as seen in the left grid.

* In the detailed heatmap, attention is concentrated on a small number of token pairs rather than spread uniformly, indicating that this head focuses on particular relationships between tokens.

* The token `is` has a high attention weight on a `<CHI>` token.

### Interpretation

The visualization offers a view into how a transformer model processes language. The attention mechanism lets the model weight relevant parts of the input when making predictions, and the detailed heatmap shows that the highlighted head (layer 8, head 8) attends to specific pairs of tokens rather than to all tokens equally. The attention from `is` to `<CHI>` may suggest that the model is relating the subject of the sentence to its properties or context. The overall sparsity of the heatmap suggests that this head is selective, concentrating on a few relationships between tokens.
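One way to put a number on "selective" attention is the entropy of each row of weights: a row spread evenly over all tokens has maximal entropy, while a row concentrated on a single token has entropy near zero. The sketch below is an illustration under stated assumptions, not an analysis of the figure. `row_entropy` and `normalized_entropy` are hypothetical helpers, all three example rows are invented, and the code assumes each row is a probability distribution, which may not hold if the plotted "saliency" is something other than a raw softmax output.

```python
import math


def row_entropy(row):
    """Shannon entropy (in nats) of one row of non-negative weights summing to 1."""
    return sum(-p * math.log(p) for p in row if p > 0.0)


def normalized_entropy(row):
    """Entropy divided by its maximum, log(len(row)): 1.0 = uniform, 0.0 = one-hot."""
    if len(row) < 2:
        return 0.0
    return row_entropy(row) / math.log(len(row))


n = 13  # number of tokens in the example sentence (whitespace split assumed)

# Invented rows for illustration only (not values read from the heatmap).
uniform_row = [1.0 / n] * n
peaked_row = [0.3] + [0.7 / (n - 1)] * (n - 1)
one_hot_row = [1.0] + [0.0] * (n - 1)

for name, row in [("uniform", uniform_row), ("peaked", peaked_row), ("one-hot", one_hot_row)]:
    print(f"{name:8s} normalized entropy = {normalized_entropy(row):.3f}")
```

Dividing by `log(len(row))` keeps the score comparable across sequences of different lengths; lower values correspond to the sparser, more selective rows described above.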