## Heatmap Visualization: Neural Network Attention/Saliency Analysis

### Overview

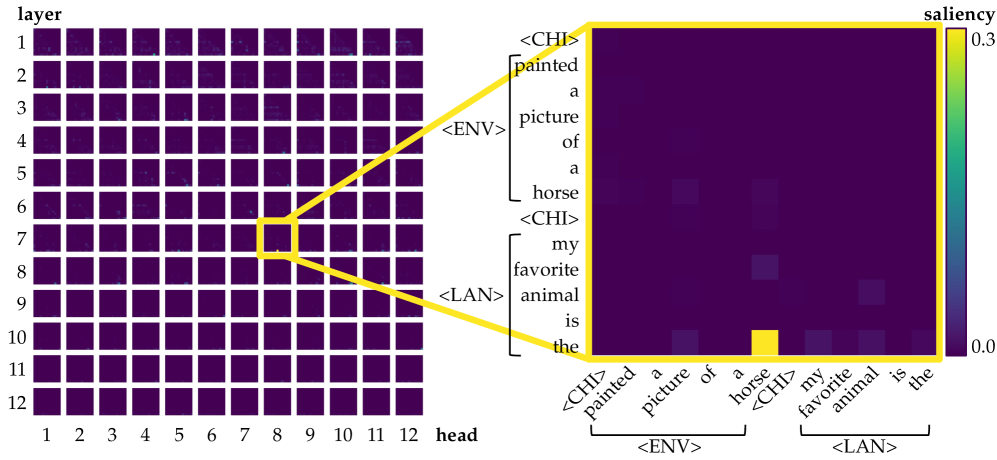

The image displays a two-part technical visualization analyzing attention or saliency patterns within a neural network, likely a transformer model. The left side shows a 12x12 grid representing different layers and heads of the model. A single cell (Layer 7, Head 8) is highlighted and magnified on the right, revealing a detailed token-to-token interaction heatmap. The visualization uses a color scale to represent "saliency" values.

### Components/Axes

**Main Grid (Left Panel):**

* **Y-axis:** Labeled "layer", numbered 1 through 12 from top to bottom.

* **X-axis:** Labeled "head", numbered 1 through 12 from left to right.

* **Content:** A 12x12 grid of small squares. Each square's color represents a value (likely average saliency or attention weight) for that specific layer-head combination. The predominant color is dark purple, with a few scattered lighter squares indicating higher values.

* **Highlight:** A yellow square outline highlights the cell at **Layer 7, Head 8**. Two yellow lines extend from this cell to the right panel, indicating a zoomed-in view.

**Zoomed Heatmap (Right Panel):**

* **Title/Legend:** A vertical color bar on the far right is labeled "saliency". The scale runs from **0.0** (dark purple) at the bottom to **0.3** (bright yellow) at the top.

* **Axes:** This is a square matrix where both the vertical (Y) and horizontal (X) axes represent the same sequence of tokens.

* **Token Sequence (Y-axis, top to bottom):**

    1. `<CHI>`
    2. `painted`
    3. `a`
    4. `picture`
    5. `of`
    6. `a`
    7. `horse`
    8. `<CHI>`
    9. `my`
    10. `favorite`
    11. `animal`
    12. `is`
    13. `the`

* **Token Sequence (X-axis, left to right):** Identical sequence to the Y-axis.

* **Group Labels:**

    * A bracket labeled `<ENV>` groups the first seven tokens (`<CHI>` through `horse`).

    * A bracket labeled `<LAN>` groups the last six tokens (`<CHI>` through `the`).

* **Content:** A 13x13 grid of cells. The color of each cell (i, j) represents the saliency value for the interaction between the i-th token (row) and the j-th token (column).
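
The axis layout described above can be written down as a small lookup structure. The following is a minimal sketch, not taken from the figure's source: the names `TOKENS`, `SEGMENTS`, `positions`, and `segment_of` are hypothetical. Because `<CHI>` and `a` each occur twice, a cell has to be addressed by position rather than by token text.

```python
# Token sequence as listed for both axes of the zoomed heatmap (13 tokens).
TOKENS = ["<CHI>", "painted", "a", "picture", "of", "a", "horse",
          "<CHI>", "my", "favorite", "animal", "is", "the"]

# Bracketed groups as 1-based inclusive position ranges.
SEGMENTS = {"<ENV>": (1, 7), "<LAN>": (8, 13)}


def positions(token):
    """Return every 1-based position at which `token` occurs."""
    return [i for i, t in enumerate(TOKENS, start=1) if t == token]


def segment_of(position):
    """Return the group label whose range contains a 1-based position."""
    for label, (start, end) in SEGMENTS.items():
        if start <= position <= end:
            return label
    raise ValueError(f"position {position} is outside the sequence")


print(positions("horse"), segment_of(7))   # row of the brightest cell
print(positions("the"), segment_of(13))    # column of the brightest cell
print(positions("<CHI>"))                  # repeated token: two positions
```

Addressing cells by position keeps the two `<CHI>` tokens (one per group) distinct.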

### Detailed Analysis

**Main Grid Analysis:**

* The grid is overwhelmingly dark purple, indicating that for most layer-head combinations, the measured saliency is near 0.0.

* A few cells show slightly lighter shades of purple/blue, suggesting marginally higher activity. The most prominent of these is the highlighted cell at **(Layer 7, Head 8)**.

* **Trend:** Saliency is highly sparse and localized to specific heads within specific layers.
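
To make "sparse and localized" concrete, one could locate the largest cell in a layer-by-head grid and count how many cells exceed a threshold. The sketch below assumes the grid is available as a nested list. The grid values and the threshold are placeholders chosen for illustration and were not read from the figure.

```python
def peak_cell(grid):
    """Return (layer, head, value) of the largest entry, using 1-based indices."""
    best = None
    for layer, row in enumerate(grid, start=1):
        for head, value in enumerate(row, start=1):
            if best is None or value > best[2]:
                best = (layer, head, value)
    return best


def fraction_above(grid, threshold):
    """Return the share of cells whose value is strictly above `threshold`."""
    cells = [value for row in grid for value in row]
    return sum(value > threshold for value in cells) / len(cells)


# Placeholder data (assumption): a 12x12 grid of near-zero values
# with a single raised cell at layer 7, head 8.
grid = [[0.01] * 12 for _ in range(12)]
grid[7 - 1][8 - 1] = 0.05

layer, head, value = peak_cell(grid)
print(f"peak at layer {layer}, head {head}: {value}")
print(f"share of cells above 0.03: {fraction_above(grid, 0.03):.4f}")
```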

**Zoomed Heatmap (Layer 7, Head 8) Analysis:**

* **Overall Pattern:** The heatmap is also predominantly dark purple (saliency ~0.0-0.05), indicating weak interactions between most token pairs.

* **Key Data Point:** There is one cell with a very high saliency value, appearing as a bright yellow square.

    * **Location:** Row corresponding to the token **"horse"** (7th token) and column corresponding to the token **"the"** (13th token).

    * **Value:** Based on the color scale, this saliency value is approximately **0.3** (the maximum on the scale).

* **Secondary Observations:** A few other cells show faint lighter purple/blue hues (saliency ~0.1-0.15), for example:

    * Interaction between "picture" (row 4) and "horse" (column 7).

    * Interaction between "animal" (row 11) and "the" (column 13).

    * These are significantly weaker than the primary "horse"-"the" interaction.
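
The ranking described above (one dominant cell, a few weaker ones) could be extracted from the underlying matrix as follows. This is a sketch under stated assumptions: the matrix is a stand-in built from the approximate values read off the color scale (about 0.3, and values in the 0.1-0.15 range), with an arbitrary 0.02 background. None of these numbers are exact data from the figure, and `top_cells` is a hypothetical helper.

```python
import heapq

TOKENS = ["<CHI>", "painted", "a", "picture", "of", "a", "horse",
          "<CHI>", "my", "favorite", "animal", "is", "the"]


def top_cells(matrix, k):
    """Return the k largest cells as (value, row, column), 1-based positions."""
    cells = ((value, i, j)
             for i, row in enumerate(matrix, start=1)
             for j, value in enumerate(row, start=1))
    return heapq.nlargest(k, cells)


# Stand-in matrix (assumption): low background plus the three cells
# named in the analysis, with approximate, illustrative values.
n = len(TOKENS)
saliency = [[0.02] * n for _ in range(n)]
saliency[7 - 1][13 - 1] = 0.30   # "horse" row, "the" column
saliency[4 - 1][7 - 1] = 0.13    # "picture" row, "horse" column
saliency[11 - 1][13 - 1] = 0.11  # "animal" row, "the" column

for value, i, j in top_cells(saliency, 3):
    print(f"row {i} ({TOKENS[i - 1]}), column {j} ({TOKENS[j - 1]}): {value}")
```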

### Key Observations

1. **Extreme Sparsity:** High saliency is exceptionally concentrated. Of the 144 layer-head pairs, only a few are visibly lighter than the background, and (7, 8) is the most prominent. Within that head's saliency map, only one token pair is strongly salient.

2. **Cross-Sentence Link:** The strongest interaction is between the final noun of the first clause ("horse") and the final determiner of the second clause ("the"). This suggests the model is forming a strong connection between the object mentioned in the first statement and the point where the second statement is about to name a noun.

3. **Intra-Clause Weak Links:** Weaker, secondary connections appear within a single clause: "picture" to "horse" in the first clause, and "animal" to "the" in the second.

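4. **Linguistic Structure:** The token sequence appears to be two short sentences or phrases: "[Someone] painted a picture of a horse" and "my favorite animal is the...". The `<CHI>`, `<ENV>`, and `<LAN>` tags are likely special control tokens. `<ENV>` and `<LAN>` may stand for environment and language. Since every token is English, `<CHI>` is more plausibly a speaker tag (CHILDES-style transcripts use CHI for the target child) than a language code such as Chinese, but the figure does not say.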
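
The cross-sentence observation can be checked numerically by comparing how much saliency falls into each block of the matrix (rows from one group, columns from another). The sketch below is illustrative only: `block_sum` is a hypothetical helper, the background is set to zero for simplicity, and the three non-zero values are approximations in the ranges described above rather than data from the figure.

```python
def block_sum(matrix, rows, cols):
    """Sum entries over 1-based inclusive (start, end) row and column ranges."""
    (r0, r1), (c0, c1) = rows, cols
    return sum(matrix[i - 1][j - 1]
               for i in range(r0, r1 + 1)
               for j in range(c0, c1 + 1))


ENV, LAN = (1, 7), (8, 13)  # group ranges from the bracket labels

# Stand-in matrix (assumption): zero background plus three illustrative cells.
saliency = [[0.0] * 13 for _ in range(13)]
saliency[7 - 1][13 - 1] = 0.30   # "horse" row, "the" column: ENV row, LAN column
saliency[4 - 1][7 - 1] = 0.13    # "picture" row, "horse" column: within ENV
saliency[11 - 1][13 - 1] = 0.11  # "animal" row, "the" column: within LAN

blocks = {
    "ENV rows x ENV cols": block_sum(saliency, ENV, ENV),
    "ENV rows x LAN cols": block_sum(saliency, ENV, LAN),
    "LAN rows x ENV cols": block_sum(saliency, LAN, ENV),
    "LAN rows x LAN cols": block_sum(saliency, LAN, LAN),
}
for name, total in blocks.items():
    print(f"{name}: {total:.2f}")
```

With these stand-in values, the cross-group block (ENV rows, LAN columns) carries more saliency than either within-group block.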

### Interpretation

This visualization provides a close look into the inner workings of a neural language model. It shows not just *that* the model processes text, but *how* saliency is distributed across heads and token pairs for a specific input.

* **What the data suggests:** In this specific layer and head, the model appears to perform a very targeted operation. It strongly links "horse" from the first context (`<ENV>`) to "the", the final token of the second context (`<LAN>`). This could reflect **coreference resolution** or **topic continuity**: the word that follows "the" plausibly refers back to the previously mentioned "horse".

* **How elements relate:** The main grid acts as a "map of maps," showing where in the network to look. The zoomed heatmap is the "map" itself, revealing the precise token-level relationships. The color scale is the critical key for quantifying these relationships.

* **Notable Anomalies:** The extreme sparsity is the most notable feature. It suggests that this head is highly specialized rather than following a diffuse, distributed pattern. The near-zero values on the diagonal (top-left to bottom-right), where a token is paired with itself, suggest that this head captures *cross-token* relationships rather than reinforcing a token's own representation.

* **Underlying Information:** Tags such as `<CHI>`, `<ENV>`, and `<LAN>` suggest that the input combines more than one kind of context. The analysis points to a possible "bridge-building" function between the two segments (`<ENV>` and `<LAN>`), which would support coherent multi-sentence understanding.
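
The remark about the diagonal can be tested by comparing the mean of the diagonal cells with the mean of all other cells. The following is a minimal sketch: `diagonal_vs_off_diagonal` is a hypothetical helper, and the 3x3 matrix is a toy example, not data from the figure.

```python
def diagonal_vs_off_diagonal(matrix):
    """Return (mean of diagonal cells, mean of off-diagonal cells) of a square matrix."""
    n = len(matrix)
    diagonal = [matrix[i][i] for i in range(n)]
    off_diagonal = [matrix[i][j]
                    for i in range(n)
                    for j in range(n)
                    if i != j]
    return sum(diagonal) / len(diagonal), sum(off_diagonal) / len(off_diagonal)


# Toy matrix (assumption): zero diagonal, a few non-zero cross-token cells.
example = [
    [0.0, 0.1, 0.3],
    [0.0, 0.0, 0.2],
    [0.0, 0.0, 0.0],
]

diag_mean, off_mean = diagonal_vs_off_diagonal(example)
print(f"diagonal mean: {diag_mean:.3f}, off-diagonal mean: {off_mean:.3f}")
```

A diagonal mean well below the off-diagonal mean would be consistent with a head that emphasizes cross-token relationships.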