## Histogram: Distribution of Thinking Token Counts

### Overview

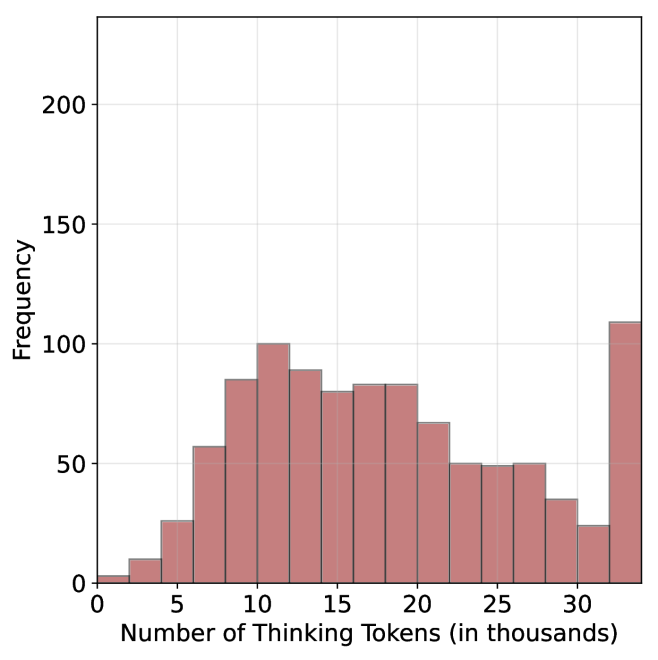

The image displays a histogram showing the frequency distribution of a metric called "Thinking Tokens" measured in thousands. The chart visualizes how often different ranges of token counts occur within a dataset. The overall shape suggests a right-skewed distribution with a notable outlier or separate category at the high end.

### Components/Axes

* **Chart Type:** Histogram (bar chart representing frequency distribution).

* **X-Axis (Horizontal):**

    * **Label:** "Number of Thinking Tokens (in thousands)"

    * **Scale:** Linear, with major tick marks and labels at intervals of 5 (0, 5, 10, 15, 20, 25, 30). The bars continue past the last labeled tick at 30.

    * **Interpretation:** Each bar represents a bin (range) of token counts. The bins appear to be approximately 2.5 thousand tokens wide.

* **Y-Axis (Vertical):**

    * **Label:** "Frequency"

    * **Scale:** Linear scale from 0 to 200, with major tick marks and labels at intervals of 50 (0, 50, 100, 150, 200).

* **Visual Elements:**

    * **Bars:** Colored in a muted red/salmon shade (`#c27d7d` approximate). Each bar's height represents the frequency (count) of observations within its corresponding token range.

    * **Grid:** A light gray grid is present in the background, aligned with the major tick marks on both axes.

    * **Legend:** No legend is present, as there is only one data series.

### Detailed Analysis

The histogram consists of 14 distinct bars. The following table reconstructs the approximate data, reading from left to right along the x-axis. Values are estimated based on bar height relative to the y-axis grid.

| Approx. Token Range (thousands) | Approx. Frequency (Count) | Visual Trend & Notes |
| :--- | :--- | :--- |
| 0 - 2.5 | ~5 | Very low frequency. |
| 2.5 - 5 | ~10 | Slight increase. |
| 5 - 7.5 | ~25 | Noticeable increase. |
| 7.5 - 10 | ~55 | Sharp increase. |
| 10 - 12.5 | ~85 | Continued increase. |
| 12.5 - 15 | ~100 | **Peak of the main distribution.** |
| 15 - 17.5 | ~90 | Slight decrease from peak. |
| 17.5 - 20 | ~80 | Further decrease. |
| 20 - 22.5 | ~85 | Slight rebound. |
| 22.5 - 25 | ~65 | Decrease. |
| 25 - 27.5 | ~50 | Plateau. |
| 27.5 - 30 | ~50 | Plateau continues. |
| 30 - 32.5 | ~35 | Decrease. |
| **> 32.5 (or 32.5-35)** | **~110** | **Significant, isolated high bar.** This bar sits to the right of the 30k tick label, so it may be an open-ended overflow bin (everything above 32.5k) or a final 32.5-35k bin. At ~110 it is the tallest bar on the chart, slightly above the main peak (~100). |

**Trend Verification:** The main body of the data (from 0 to ~32.5k tokens) forms a unimodal, right-skewed distribution. It rises steadily to a peak at the 12.5-15k bin and then declines gradually, apart from a slight rebound at 20-22.5k. The final bar is a clear outlier, breaking the declining trend with a sharp, isolated spike.
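
The peak and the centre of the reconstructed distribution can be checked with a few lines of arithmetic. The sketch below is only as good as the estimated bar heights in the table: `COUNTS` is transcribed from those estimates, not from the underlying data, and the helper names (`bin_label`, `tallest`, `median_bin`) are made up for this illustration. It also assumes the final bar is a closed 32.5-35k bin purely for labeling.

```python
# Approximate bar heights transcribed from the table (estimates, not raw data).
BIN_WIDTH = 2.5  # thousands of tokens per bin, as estimated from the chart
COUNTS = [5, 10, 25, 55, 85, 100, 90, 80, 85, 65, 50, 50, 35, 110]


def bin_label(i: int) -> str:
    """Human-readable range for bin i, assuming equal-width bins starting at 0."""
    lo = i * BIN_WIDTH
    return f"{lo:g}-{lo + BIN_WIDTH:g}k"


def tallest(counts) -> int:
    """Index of the tallest bar (first one wins on ties)."""
    return max(range(len(counts)), key=counts.__getitem__)


def median_bin(counts) -> int:
    """Index of the bin that contains the median observation."""
    half = sum(counts) / 2
    running = 0
    for i, c in enumerate(counts):
        running += c
        if running >= half:
            return i
    raise ValueError("counts must not be empty")


overall_peak = tallest(COUNTS)          # includes the final bar
main_body_peak = tallest(COUNTS[:-1])   # excludes the final bar

print("total observations:", sum(COUNTS))
print("tallest bar overall:", bin_label(overall_peak), COUNTS[overall_peak])
print("main-body peak:", bin_label(main_body_peak), COUNTS[main_body_peak])
print("median bin:", bin_label(median_bin(COUNTS)))
```

With these estimates the total is 845 observations, the main-body peak is the 12.5-15k bin, the tallest bar overall is the final one, and the median lands in the 17.5-20k bin, noticeably above the mode.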

### Key Observations

1. **Primary Mode:** The most common range for thinking tokens is between 12,500 and 15,000.

2. **Right Skew:** The tail of the distribution extends further to the right (higher token counts) than to the left, indicating a subset of instances requiring significantly more tokens.

3. **Significant Outlier Bin:** The final bar (representing >32.5k or 32.5-35k tokens) has an estimated frequency (~110) slightly higher than the main peak (~100). This is not a gradual tail but a distinct, high-frequency cluster at the extreme end of the scale.

4. **Data Range:** By the estimated bar heights, roughly 80% of observed token counts fall between 5,000 and 30,000; most of the remainder sits in the final bar.
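
The range shares in these observations can be derived from the estimated bar heights. This is a rough sketch under the same caveat as the table: the counts are visual estimates, and `share_between` is a hypothetical helper that only counts bins lying entirely inside the requested range.

```python
# Approximate bar heights transcribed from the table (estimates, not raw data).
BIN_WIDTH = 2.5  # thousands of tokens per bin
COUNTS = [5, 10, 25, 55, 85, 100, 90, 80, 85, 65, 50, 50, 35, 110]


def share_between(counts, lo, hi, width=BIN_WIDTH):
    """Fraction of observations in bins lying entirely within [lo, hi] (thousands)."""
    total = sum(counts)
    inside = sum(
        c for i, c in enumerate(counts)
        if i * width >= lo and (i + 1) * width <= hi
    )
    return inside / total


main_range = share_between(COUNTS, 5, 30)   # bins from 5k up to 30k
final_bar = COUNTS[-1] / sum(COUNTS)        # the isolated bar at the far right

print(f"5k-30k share:   {main_range:.0%}")
print(f"final bar share: {final_bar:.0%}")
```

On these estimates about 81% of observations fall between 5k and 30k, and the final bar alone accounts for about 13%.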

### Interpretation

This histogram likely represents the distribution of computational effort (measured in "thinking tokens") required for a set of tasks or queries processed by an AI model. The data suggests:

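* **Typical Performance:** The largest concentration of tasks sits between 10,000 and 20,000 tokens (roughly 40% of the estimated counts), with the mode in the 12,500-15,000 bin. Because so much mass lies to the right of the mode, the median falls higher, in the 17,500-20,000 bin.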

* **Efficiency Tail:** The gradual decline from 15k to 30k shows that progressively fewer tasks require this higher level of effort.

* **Critical Anomaly:** The isolated spike at the far right is the most important feature. It indicates a **non-trivial subset of tasks (over 100 instances) that demand exceptionally high token counts (above roughly 32.5k)**. This could represent:

    * A specific, complex category of problem.

    * Potential inefficiencies or "hard" cases for the model.

    * A separate class of data that was inadvertently included or requires different handling.

    * A possible data artifact or binning effect (e.g., all values above a cutoff being lumped into one final bin, or token counts being capped at a maximum).
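
The last possibility, an overflow bin, is easy to reproduce. The sketch below uses synthetic, made-up data (a log-normal sample with arbitrary parameters) and a hypothetical cutoff of 32,500 tokens. It does not show that the chart was built this way, only that lumping a smooth right tail into one final bin is enough to produce an isolated spike.

```python
import random

BIN_WIDTH = 2_500  # tokens per bin, matching the estimated bar width
CAP = 32_500       # hypothetical cutoff; everything at or above it shares one bin


def histogram_with_overflow(values, bin_width=BIN_WIDTH, cap=CAP):
    """Count non-negative values into fixed-width bins plus one final overflow bin."""
    n_regular = cap // bin_width          # number of ordinary bins below the cap
    counts = [0] * (n_regular + 1)        # last slot is the overflow bin
    for v in values:
        idx = min(int(v // bin_width), n_regular)
        counts[idx] += 1
    return counts


rng = random.Random(0)  # fixed seed so the run is repeatable
# Synthetic data with a long, smooth right tail and no real cluster at the top.
values = [rng.lognormvariate(9.8, 0.6) for _ in range(1000)]

counts = histogram_with_overflow(values)
for i, c in enumerate(counts):
    lo = i * BIN_WIDTH / 1000
    label = f">= {lo:g}k" if i == len(counts) - 1 else f"{lo:g}-{lo + 2.5:g}k"
    print(f"{label:>12} {c}")
```

If the underlying token counts are available, re-plotting them with an x-axis that extends to the true maximum would show whether the final bar is a genuine cluster or just an accumulated tail.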

**Conclusion:** The system's token usage is not uniformly distributed. While it operates efficiently for most tasks, there is a significant and distinct group of outlier cases that consume resources at a rate far exceeding the norm. Investigating the nature of these high-token tasks would be crucial for optimizing performance, managing costs, or understanding model limitations.