## Line Charts: Performance Comparison of MLA, GDN-H, and Kimi Linear

### Overview

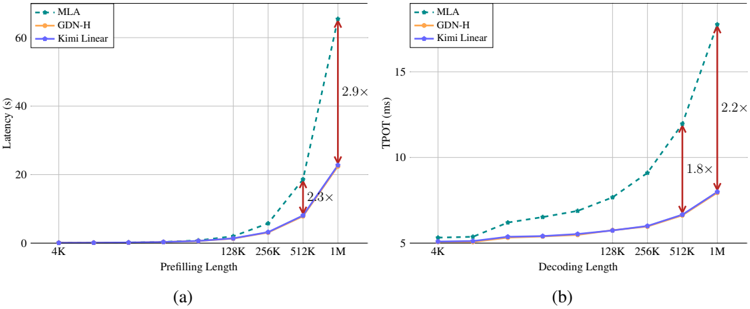

The image contains two side-by-side line charts, labeled (a) and (b), comparing three methods (MLA, GDN-H, and Kimi Linear) across increasing sequence lengths. Chart (a) measures latency in seconds during the prefilling phase, while chart (b) measures Time Per Output Token (TPOT) in milliseconds during the decoding phase. In both charts, Kimi Linear's cost grows markedly more slowly with sequence length than that of the other two methods.

### Components/Axes

**Common Elements (Both Charts):**

* **Legend:** Located in the top-left corner of each chart. Contains three entries:

  * `MLA`: Represented by a teal, dashed line with circular markers.

  * `GDN-H`: Represented by an orange, solid line with diamond markers.

  * `Kimi Linear`: Represented by a purple, solid line with square markers.

* **X-Axis:** Represents sequence length on a logarithmic scale. The labeled tick marks are: `4K`, `128K`, `256K`, `512K`, `1M`.

* **Annotations:** Red, double-headed vertical arrows with text labels indicate the speedup factor of Kimi Linear relative to MLA at specific data points.

**Chart (a) Specifics:**

* **Title/Label:** `(a)` is centered below the chart.

* **Y-Axis:** Labeled `Latency (s)`. The scale runs from 0 to 60 with major gridlines at 0, 20, 40, and 60.

* **X-Axis Title:** `Prefilling Length`.

**Chart (b) Specifics:**

* **Title/Label:** `(b)` is centered below the chart.

* **Y-Axis:** Labeled `TPOT (ms)`. The scale runs from 5 to 15 with major gridlines at 5, 10, and 15.

* **X-Axis Title:** `Decoding Length`.

### Detailed Analysis

**Chart (a) - Prefilling Latency:**

* **Trend Verification:**

  * **MLA (Teal, dashed):** Rises steeply, with latency growing superlinearly in sequence length (from ~18s at 512K to ~60s at 1M).

  * **GDN-H (Orange, solid):** Follows a nearly identical, steep upward trajectory to MLA.

  * **Kimi Linear (Purple, solid):** Slopes upward much more gradually, reaching only ~20s at 1M.

* **Data Points & Annotations:**

  * At `4K`, all three methods have near-zero latency.

  * At `512K`, a red arrow between the MLA and Kimi Linear points is labeled `2.3x`. This indicates Kimi Linear's latency is approximately 2.3 times lower (faster) than MLA's at this length. MLA's latency appears to be ~18s, while Kimi Linear's is ~8s.

  * At `1M`, a red arrow between the MLA and Kimi Linear points is labeled `2.9x`. MLA's latency is near the top of the chart (~60s), while Kimi Linear's is approximately 20s.

**Chart (b) - Decoding TPOT:**

* **Trend Verification:**

  * **MLA (Teal, dashed):** Slopes upward, with the rate of increase accelerating after 128K.

  * **GDN-H (Orange, solid):** Follows a similar, slightly less steep upward trend than MLA.

  * **Kimi Linear (Purple, solid):** Slopes upward very gradually, maintaining a low TPOT.

* **Data Points & Annotations:**

  * At `4K`, all methods start around 5 ms TPOT.

  * At `512K`, a red arrow between the MLA and Kimi Linear points is labeled `1.8x`. MLA's TPOT is ~12 ms, while Kimi Linear's is ~6.5 ms.

  * At `1M`, a red arrow between the MLA and Kimi Linear points is labeled `2.2x`. MLA's TPOT peaks above 15 ms, while Kimi Linear's is approximately 7 ms.
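The approximate readings above can be cross-checked against the annotated speedups with a short calculation. This is a minimal sketch: the numbers are the eyeballed readings listed in this section, not measured data, and the names `READINGS`, `implied_speedup`, and `check_readings` are illustrative.

```python
# Approximate readings from the charts (eyeballed, not exact measurements).
# Each entry: (chart, length, MLA value, Kimi Linear value, annotated speedup).
READINGS = [
    ("prefill latency (s)", "512K", 18.0, 8.0, 2.3),
    ("prefill latency (s)", "1M", 60.0, 20.0, 2.9),
    ("decode TPOT (ms)", "512K", 12.0, 6.5, 1.8),
    # MLA is described as "above 15 ms" here, so 15.0 is a lower bound.
    ("decode TPOT (ms)", "1M", 15.0, 7.0, 2.2),
]


def implied_speedup(mla: float, kimi: float) -> float:
    """Speedup of Kimi Linear over MLA: ratio of MLA cost to Kimi Linear cost."""
    return mla / kimi


def check_readings(readings=READINGS):
    """Return (chart, length, implied speedup, annotated speedup) per data point."""
    rows = []
    for chart, length, mla, kimi, annotated in readings:
        rows.append((chart, length, round(implied_speedup(mla, kimi), 2), annotated))
    return rows


for chart, length, implied, annotated in check_readings():
    print(f"{chart:20s} {length:>4s}  implied {implied:.2f}x  annotated {annotated:.1f}x")
```

Each implied ratio lands within about 0.1 of its annotation, so the approximate readings are consistent with the labels on the red arrows.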

### Key Observations

1. **Performance Hierarchy:** In both prefilling and decoding, Kimi Linear matches the other methods at `4K` and outperforms them at longer lengths. Its annotated speedup over MLA grows from 2.3x to 2.9x for prefilling and from 1.8x to 2.2x for decoding between `512K` and `1M`.

2. **Similarity of Baselines:** The performance curves for MLA and GDN-H are very similar in shape and magnitude across both tasks, suggesting comparable underlying scaling characteristics.

3. **Superlinear vs. Near-Linear Growth:** MLA and GDN-H show superlinear growth in cost (latency/TPOT) with sequence length. The curves resemble exponentials partly because the x-axis is logarithmic, which makes any polynomial growth bend upward; the readings themselves do not establish exponential growth. Kimi Linear's curves are markedly flatter, which is an advantage for long-context processing.

4. **Magnitude of Speedup:** The annotated speedups are substantial, reaching nearly 3x for prefilling and over 2x for decoding at the 1M token length.
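One rough way to gauge the growth order from the chart (a) readings is the local scaling exponent between the last two ticks, i.e. the `k` for which cost scales as `length**k` when the length doubles from `512K` to `1M`. The sketch below assumes the approximate prefill latencies quoted in this document; `local_exponent` is an illustrative helper, not part of any library.

```python
import math


def local_exponent(cost_short: float, cost_long: float, length_ratio: float = 2.0) -> float:
    """Exponent k such that cost grows like length**k between two points."""
    return math.log(cost_long / cost_short) / math.log(length_ratio)


# Approximate prefill latencies (s) read off chart (a) at 512K and 1M.
mla_k = local_exponent(18.0, 60.0)
kimi_k = local_exponent(8.0, 20.0)

print(f"MLA local exponent:         {mla_k:.2f}")
print(f"Kimi Linear local exponent: {kimi_k:.2f}")
```

With these readings the exponents come out near 1.7 for MLA and near 1.3 for Kimi Linear, where 1 would be linear and 2 quadratic. Two eyeballed points per curve give only a coarse indication and cannot distinguish polynomial from exponential growth on their own.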

### Interpretation

These charts indicate that the "Kimi Linear" method handles long sequences more efficiently than MLA and GDN-H, with the advantage growing as sequences lengthen. The flatter curves are consistent with an architecture whose cost scales better with context length, although the charts alone do not show where the savings come from.

* **For Prefilling (Chart a):** The 2.9x speedup at 1M tokens means that processing a very long input prompt would be almost three times faster with Kimi Linear compared to MLA. This directly translates to lower latency for user interactions and reduced computational cost for serving models.

* **For Decoding (Chart b):** The 2.2x reduction in TPOT at 1M tokens means that generating each new token in a long conversation or document is more than twice as fast. This improves the responsiveness of the model during generation and increases throughput.
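The link between TPOT and generation speed is a simple reciprocal, sketched below for a single sequence. The TPOT values are the approximate `1M` readings from chart (b) (MLA is a lower bound of 15 ms), and `tokens_per_second` is an illustrative helper.

```python
def tokens_per_second(tpot_ms: float) -> float:
    """Convert Time Per Output Token (milliseconds) to tokens generated per second."""
    if tpot_ms <= 0:
        raise ValueError("TPOT must be positive")
    return 1000.0 / tpot_ms


# Approximate TPOT readings at a 1M decoding length.
mla_rate = tokens_per_second(15.0)   # MLA reads "above 15 ms", so this rate is an upper bound
kimi_rate = tokens_per_second(7.0)   # Kimi Linear reads roughly 7 ms

print(f"MLA:         at most {mla_rate:.1f} tokens/s")
print(f"Kimi Linear: about   {kimi_rate:.1f} tokens/s")
```

Under these readings, Kimi Linear generates roughly 143 tokens per second per sequence at `1M`, against at most about 67 for MLA.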

The similar scaling of MLA and GDN-H suggests they may share a bottleneck. Their superlinear prefill growth is consistent with a cost term that is quadratic in sequence length, as in full attention, though the charts cannot confirm the cause. Kimi Linear's flatter curves suggest it mitigates this bottleneck, possibly through a linear attention mechanism or a more efficient hardware-aware implementation. Overall, the charts show Kimi Linear processing million-token contexts at roughly one third of MLA's prefill latency and under half of its per-token decoding time.